Enterprise IT environments demand constant, uninterrupted access to data. When hundreds or thousands of users and applications request data concurrently, the underlying infrastructure must process these requests without latency spikes. This concurrency challenge requires robust architecture capable of routing, prioritizing, and fulfilling input/output (I/O) requests efficiently.

Network Storage Solutions are engineered to solve this exact problem. By separating storage resources from application servers, these systems centralize data management while distributing the access load. The mechanics of this process involve a combination of hardware capabilities, network protocols, and intelligent software algorithms working in tandem to prevent bottlenecks.

Understanding how these systems handle simultaneous traffic streams is critical for storage administrators and network engineers. This article breaks down the technical processes that allow NAS Storage environments and broader storage networks to process concurrent read and write operations, ensuring data integrity and continuous system availability across shared infrastructure.

The Architecture of Concurrent Data Access

Handling simultaneous traffic streams requires an architecture that can instantly interpret, organize, and execute overlapping commands. Shared infrastructure relies on specialized protocols and file systems to manage this heavy concurrency.

File-Level Operations and Locking Mechanisms

In a standard NAS Storage setup, data is accessed at the file level rather than the block level. When multiple users attempt to access the same file simultaneously, the NAS operating system and the file-sharing protocol coordinate access through file locks. Read (shared) locks permit multiple users to view a file concurrently, while a write (exclusive) lock ensures that only one user can modify a file at any given time. This locking prevents conflicting writes from corrupting data and keeps the shared volume consistent.
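The shared-versus-exclusive rule described above can be sketched in a few lines of Python. This is a minimal in-memory illustration, not how any particular NAS product implements locking; the `LockTable` class, its method names, and the file path are hypothetical.

```python
from collections import defaultdict


class LockTable:
    """Hypothetical lock table: shared read locks, one exclusive write lock per file."""

    def __init__(self):
        self._readers = defaultdict(set)  # path -> clients holding a read lock
        self._writer = {}                 # path -> client holding the write lock

    def acquire_read(self, path, client):
        # A read lock is refused only while another client holds the write lock.
        if self._writer.get(path, client) != client:
            return False
        self._readers[path].add(client)
        return True

    def acquire_write(self, path, client):
        # A write lock requires that no other client is reading or writing the file.
        other_readers = self._readers[path] - {client}
        if other_readers or self._writer.get(path, client) != client:
            return False
        self._writer[path] = client
        return True

    def release(self, path, client):
        # Drop whatever locks this client holds on the file.
        self._readers[path].discard(client)
        if self._writer.get(path) == client:
            del self._writer[path]


locks = LockTable()
alice_reads = locks.acquire_read("/vol1/report.xlsx", "alice")
bob_reads = locks.acquire_read("/vol1/report.xlsx", "bob")
# Refused, because two readers still hold shared locks on the file.
carol_writes = locks.acquire_write("/vol1/report.xlsx", "carol")
```

Both readers are admitted at once, while the writer is turned away until every reader has released the file. Real protocols add details this sketch leaves out, such as byte-range locks, lock timeouts, and recovery after a client crash.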

Network Protocol Optimization

Modern Network Storage Solutions rely on file-sharing protocols such as Network File System (NFS) and Server Message Block (SMB). Both allow a client to keep multiple requests outstanding on a single Transmission Control Protocol (TCP) connection, so independent data streams can share one connection instead of queuing behind each other. With asynchronous I/O, the storage controller can accept a request, hand it off to the disk subsystem, and immediately begin servicing the next one rather than blocking until the physical disk completes the data retrieval.
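To illustrate the asynchronous pattern, the following sketch uses Python's `asyncio` to start three reads at once and collect them as they finish. It is only an analogy for what a storage controller does: `read_block`, `serve`, the request IDs, and the latency values are all made up for the example, and `asyncio.sleep` stands in for the wait on physical media.

```python
import asyncio


async def read_block(request_id, disk_latency):
    # asyncio.sleep simulates waiting for the disk; no real I/O happens here.
    await asyncio.sleep(disk_latency)
    return request_id


async def serve(requests):
    # Start every request immediately instead of finishing one before the next.
    tasks = [asyncio.create_task(read_block(rid, lat)) for rid, lat in requests]
    completed = []
    # Collect replies in the order they finish, not the order they were issued.
    for finished in asyncio.as_completed(tasks):
        completed.append(await finished)
    return completed


# Request "A" is issued first but has the slowest simulated disk access.
order = asyncio.run(serve([("A", 0.3), ("B", 0.1), ("C", 0.2)]))
```

Because replies can come back out of order, each one carries its request ID so the client can match it to the original request. The total time is close to the slowest single request rather than the sum of all three.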

Traffic Control Mechanisms in Shared Infrastructure

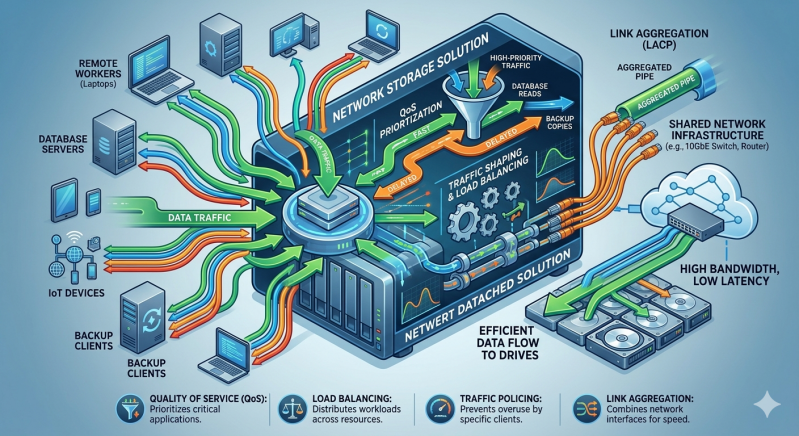

To prevent network congestion and storage controller overload, enterprise systems employ active traffic management strategies. These mechanisms ensure that critical applications receive the resources they require, even during peak utilization periods.

Quality of Service (QoS) Implementation

Quality of Service policies are foundational to modern Network Storage Solutions. Administrators assign I/O priority levels to specific applications, users, or data volumes. When simultaneous traffic streams saturate the available bandwidth, the storage controller references these QoS policies to decide which queued I/O requests to service first. Mission-critical database queries might be prioritized over routine background backups, maintaining acceptable latency for tier-one applications.
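A strict-priority queue is one simple way to picture this behavior. The sketch below assumes a hypothetical `QosQueue` class and a made-up policy in which lower numbers are served first; it is not the scheduling algorithm of any specific storage system.

```python
import heapq
import itertools


class QosQueue:
    """Hypothetical I/O queue that serves requests by workload priority."""

    def __init__(self, priorities):
        self._priorities = priorities      # workload name -> priority (lower = sooner)
        self._heap = []
        self._arrival = itertools.count()  # tie-breaker keeps FIFO order within a priority

    def submit(self, workload, request):
        priority = self._priorities[workload]
        heapq.heappush(self._heap, (priority, next(self._arrival), workload, request))

    def next_request(self):
        # Pop the highest-priority request; among equals, the oldest one wins.
        _, _, workload, request = heapq.heappop(self._heap)
        return workload, request

    def __len__(self):
        return len(self._heap)


# Illustrative policy: database traffic outranks backup traffic.
policy = {"database": 0, "backup": 2}
queue = QosQueue(policy)
queue.submit("backup", "chunk-1")
queue.submit("database", "query-1")
queue.submit("backup", "chunk-2")
queue.submit("database", "query-2")
served = [queue.next_request() for _ in range(len(queue))]
```

Both database queries are served before either backup chunk, even though a backup chunk arrived first. Strict priority can starve low-priority work under sustained load, so production QoS schemes commonly combine priorities with rate limits or guaranteed minimums.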

Multipathing and Load Balancing

Enterprise environments rarely rely on a single physical connection between the server and the storage array. Multipathing software establishes redundant physical paths to the storage controller. As simultaneous traffic streams arrive, the load balancing algorithm distributes them dynamically across all available network interfaces. If one path becomes congested or fails completely, the software automatically reroutes the traffic to alternate paths without dropping the connection.
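The load balancing and failover logic can be approximated with a round-robin selector that skips failed paths. This is a simplified sketch: `MultipathSelector` and the path names are hypothetical, and real multipathing software also weighs factors such as queue depth and path latency.

```python
class MultipathSelector:
    """Hypothetical round-robin path selector with failover."""

    def __init__(self, paths):
        self._paths = list(paths)
        self._healthy = set(self._paths)
        self._next = 0

    def mark_failed(self, path):
        self._healthy.discard(path)

    def mark_restored(self, path):
        if path in self._paths:
            self._healthy.add(path)

    def pick(self):
        # Round-robin over the configured paths, skipping any marked as failed.
        for _ in range(len(self._paths)):
            path = self._paths[self._next]
            self._next = (self._next + 1) % len(self._paths)
            if path in self._healthy:
                return path
        raise RuntimeError("no healthy path to the storage controller")


selector = MultipathSelector(["path-a", "path-b", "path-c"])
before_failure = [selector.pick() for _ in range(4)]

# Simulate a link failure; later requests flow over the remaining paths.
selector.mark_failed("path-b")
after_failure = [selector.pick() for _ in range(3)]
```

Before the failure, requests rotate evenly across all three paths. After `path-b` is marked as failed, the selector keeps rotating but never hands it out, so callers see no interruption.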

Hardware Acceleration and Data Tiering

Software algorithms require capable hardware to function effectively under heavy loads. The physical components within a NAS Storage array dictate the absolute limit of concurrent operations the system can process.

RAM and NVMe Caching

Storage controllers use large pools of Random Access Memory (RAM) and Non-Volatile Memory Express (NVMe) solid-state drives as high-speed caches. When simultaneous read requests target the same frequently accessed data, the controller serves this data directly from the cache. This bypasses the slower spinning disks entirely, cutting response times from the milliseconds typical of a disk seek to the microsecond range for a cache hit and allowing the system to sustain thousands of concurrent requests.
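A least-recently-used (LRU) read cache shows the idea in miniature. LRU is used here as an assumption for illustration, since the eviction policy of a given controller is vendor-specific; `ReadCache`, `slow_disk`, and the block numbers are hypothetical.

```python
from collections import OrderedDict


class ReadCache:
    """Hypothetical LRU read cache in front of a slower backend."""

    def __init__(self, capacity, backend):
        self._capacity = capacity
        self._backend = backend            # callable: block_id -> data
        self._blocks = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, block_id):
        if block_id in self._blocks:
            self._blocks.move_to_end(block_id)   # mark as most recently used
            self.hits += 1
            return self._blocks[block_id]
        self.misses += 1
        data = self._backend(block_id)           # slow path: fetch from disk
        self._blocks[block_id] = data
        if len(self._blocks) > self._capacity:
            self._blocks.popitem(last=False)     # evict the least recently used block
        return data


disk_reads = []


def slow_disk(block_id):
    # Stand-in for a spinning-disk read; records each access for inspection.
    disk_reads.append(block_id)
    return f"data-{block_id}"


cache = ReadCache(capacity=2, backend=slow_disk)
for block in [1, 1, 2, 1, 3, 2]:
    cache.read(block)
```

Of the six reads, the two repeat requests for block 1 are served from the cache and never reach the disk. Block 2 is evicted when block 3 arrives, so its second read goes back to the backend, which is why cache sizing matters under heavy concurrency.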

Automated Storage Tiering

For long-term traffic management, automated tiering analyzes access patterns over time. Frequently accessed data (hot data) is automatically moved to high-performance flash storage, while infrequently accessed data (cold data) resides on high-capacity hard drives. By distributing the data across different storage media based on access frequency, the system inherently reduces the likelihood of a single disk group becoming a bottleneck during periods of high simultaneous traffic.
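The placement decision can be sketched as a simple threshold on access counts. The `plan_tiers` function, the threshold value, and the file names below are assumptions for illustration; commercial tiering engines typically work on blocks or extents and also consider recency and available capacity.

```python
from collections import Counter


def plan_tiers(access_log, hot_threshold):
    """Map each item to "flash" or "hdd" based on how often it was accessed."""
    counts = Counter(access_log)
    return {
        item: "flash" if hits >= hot_threshold else "hdd"
        for item, hits in counts.items()
    }


# Illustrative access log collected over some observation window.
log = ["db.idx"] * 5 + ["archive.tar"] + ["logs.gz"] * 2
placement = plan_tiers(log, hot_threshold=3)
```

With a threshold of three accesses, only `db.idx` qualifies as hot and is placed on flash, while the other two items stay on hard drives. Rerunning the plan on a newer log lets placement follow shifting access patterns.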

Securing and Isolating Traffic Streams

Security and performance are closely linked in shared infrastructure. Network administrators use virtual local area networks (VLANs) to segment traffic at the data link layer (Layer 2).

By isolating storage traffic from standard user traffic, administrators reduce the risk that a sudden spike in general network activity will degrade storage performance. This isolation also makes it harder for unauthorized users to intercept storage packets, adding a necessary layer of security to the NAS Storage environment.

Optimizing Your Storage Infrastructure

Effectively managing simultaneous traffic streams requires a comprehensive approach to both network and storage design. Hardware capabilities must align with software configurations to create a seamless flow of data.

As your organization scales, regularly audit your Network Storage Solutions to ensure QoS policies, multipathing configurations, and caching algorithms remain aligned with your current traffic patterns. Upgrading your NAS Storage with advanced NVMe caching or adjusting your network segmentation can yield significant performance improvements, ensuring your shared infrastructure continues to operate with maximum efficiency.
