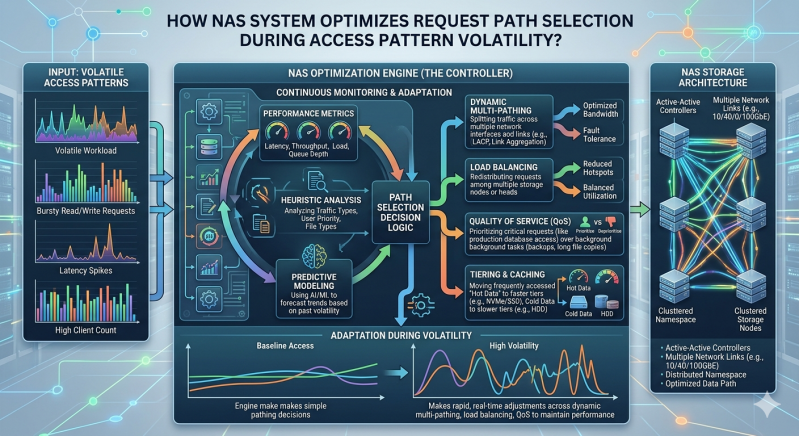

Network-attached storage environments face constant shifts in application behavior, user demand, and data ingestion rates. When workloads change unpredictably, storage architectures must adapt instantly to prevent bottlenecks. Access pattern volatility occurs when input/output (I/O) requests transition rapidly between sequential large-block transfers and random small-block operations. These sudden shifts can overwhelm static network configurations, causing increased latency and degraded application performance.

For IT administrators and storage architects, maintaining high availability and throughput during these erratic periods is a primary objective. A modern NAS system addresses this challenge with dynamic request path selection. Instead of relying on a single, fixed route for data packets, the architecture evaluates multiple network paths in real time. Traffic is then distributed across the available interfaces, so that congestion on any one link is less likely to slow down the entire infrastructure.

Understanding how an enterprise NAS storage infrastructure executes this path optimization requires an examination of the underlying protocols, telemetry data, and load-balancing algorithms. By continuously monitoring the health and utilization of network links, storage controllers can make sub-second routing decisions. This systematic approach helps data reach its destination efficiently, even when the underlying access patterns fluctuate sharply.

The Mechanics of Access Pattern Volatility

To appreciate how a NAS system optimizes data routing, one must first examine the nature of I/O volatility. Enterprise environments host a diverse array of applications. A database might execute millions of small, latency-sensitive random reads during peak transaction hours. Meanwhile, a backup application might trigger massive, sequential write operations on the same storage array. When these operations overlap, the mixed stream (often called the I/O blender effect) produces severe access pattern volatility.

Recognizing I/O Contention

In traditional architectures, static routing protocols often force all traffic from a specific server through a designated host bus adapter (HBA) or network interface card (NIC) port. If a sudden burst of sequential writes saturates that specific path, subsequent random read requests from other applications queue up behind the large data payload. The storage array might have plenty of available IOPS (Input/Output Operations Per Second) on the backend, but the network path itself becomes the bottleneck.

An advanced enterprise NAS storage array actively monitors these conditions. By tracking queue depths, packet drop rates, and transmission latency across all active interfaces, the system can identify when and where contention is occurring.
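
As a rough illustration of that monitoring step, the sketch below flags interfaces whose queue depth, drop rate, or latency exceeds a limit. The `PathSample` fields, the threshold values, and the function names are all hypothetical and chosen only for this example; real arrays expose their own counters and tune limits per workload.

```python
from dataclasses import dataclass


@dataclass
class PathSample:
    """One telemetry sample for a single interface (hypothetical fields)."""
    name: str
    queue_depth: int    # outstanding I/O requests on this path
    drop_rate: float    # fraction of packets dropped, 0.0 to 1.0
    latency_ms: float   # observed transmission latency in milliseconds


# Illustrative thresholds only; real limits depend on hardware and workload.
MAX_QUEUE_DEPTH = 64
MAX_DROP_RATE = 0.01
MAX_LATENCY_MS = 5.0


def contention_reasons(sample: PathSample) -> list[str]:
    """Return the names of the thresholds this sample exceeds."""
    reasons = []
    if sample.queue_depth > MAX_QUEUE_DEPTH:
        reasons.append("queue_depth")
    if sample.drop_rate > MAX_DROP_RATE:
        reasons.append("drop_rate")
    if sample.latency_ms > MAX_LATENCY_MS:
        reasons.append("latency")
    return reasons


def find_contended(samples: list[PathSample]) -> dict[str, list[str]]:
    """Map each contended path name to the reasons it was flagged."""
    flagged = {}
    for sample in samples:
        reasons = contention_reasons(sample)
        if reasons:
            flagged[sample.name] = reasons
    return flagged
```

Calling `find_contended` on one sample per interface returns only the paths that need attention, along with which metric tripped, which is the "when and where" information a path selector would act on.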

Dynamic Path Selection in Enterprise Environments

To mitigate the effects of I/O contention, a robust NAS system employs dynamic path selection. For block protocols such as iSCSI, this capability relies heavily on Multipath I/O (MPIO) frameworks and Asymmetric Logical Unit Access (ALUA), which allow the storage controller to present multiple active routes to the connected client or hypervisor.

Real-Time Telemetry and Analytics

Optimization begins with accurate, real-time telemetry. The enterprise NAS storage controller continuously polls the status of all available network paths. It measures metrics such as round-trip time, bandwidth utilization, and switch port congestion. If the telemetry data indicates that a specific path is approaching its bandwidth threshold due to a burst of large-file transfers, the controller registers this state change immediately.
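
The threshold check described above can be sketched as a small state tracker. This is a minimal example under assumed names: `PathMonitor`, its `threshold` default of 80 percent, and the `update` method are hypothetical, not any vendor's interface.

```python
class PathMonitor:
    """Tracks bandwidth utilization for one path and reports state changes."""

    def __init__(self, name: str, capacity_gbps: float, threshold: float = 0.8):
        self.name = name
        self.capacity_gbps = capacity_gbps
        self.threshold = threshold   # fraction of capacity treated as "near limit"
        self.congested = False

    def update(self, observed_gbps: float) -> bool:
        """Record a new sample; return True only when the state flips."""
        utilization = observed_gbps / self.capacity_gbps
        now_congested = utilization >= self.threshold
        changed = now_congested != self.congested
        self.congested = now_congested
        return changed
```

Reporting only the flips, rather than every sample, keeps the downstream path selector from reacting to each poll when the state has not actually changed.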

When access patterns are highly volatile, these state changes occur continuously. The storage infrastructure must process this telemetry data without adding compute overhead to the data path. Dedicated silicon or offload engines within the storage nodes typically handle this analytical workload, ensuring that the primary CPUs remain dedicated to serving client I/O requests.

Load Balancing Algorithms

Once the telemetry data is processed, the NAS system applies load-balancing algorithms to determine the best route for incoming and outgoing data. Several distinct approaches are used, depending on the storage protocol (NFS, SMB, or iSCSI) and the administrative configuration:

- Least Queue Depth: The system evaluates the number of outstanding I/O requests on every available path. New requests are automatically routed to the path with the shortest queue. This is highly effective during periods of random I/O volatility, as it prevents micro-transactions from getting stuck behind large, sequential payloads.

- Weighted Round Robin: Paths are assigned a specific weight based on their total bandwidth capacity. The system distributes requests proportionally. If a 100GbE link and a 25GbE link are both active, the higher-capacity link receives a larger percentage of the traffic.

- Adaptive Load Balancing: The most advanced enterprise NAS storage environments use predictive analytics. By analyzing historical access patterns alongside real-time data, the system anticipates congestion before it occurs and preemptively reroutes traffic.
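
The first two policies in the list are simple enough to sketch directly. The functions below are illustrative only: the names `least_queue_depth` and `weighted_round_robin` and the dictionary inputs are assumptions for this example, and the round-robin version uses a naive repeated schedule rather than the smoother interleaving a production scheduler would likely use.

```python
import itertools
from collections import Counter


def least_queue_depth(queue_depths: dict[str, int]) -> str:
    """Pick the path with the fewest outstanding requests.

    On a tie, the path listed first in the dictionary wins.
    """
    return min(queue_depths, key=queue_depths.get)


def weighted_round_robin(weights: dict[str, int]):
    """Return an endless iterator of path names, proportional to weight.

    Naive approach: each name is repeated `weight` times in a fixed
    schedule, which is then cycled forever.
    """
    schedule = [name for name, w in weights.items() for _ in range(w)]
    return itertools.cycle(schedule)


# Example: a 100GbE link weighted 4 and a 25GbE link weighted 1.
picker = weighted_round_robin({"100g": 4, "25g": 1})
first_ten = Counter(itertools.islice(picker, 10))
```

With weights of 4 and 1, the faster link is chosen for four out of every five requests, which matches the capacity ratio between a 100GbE and a 25GbE link.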

Executing Sub-Second Path Failover

Path optimization is not merely about balancing active traffic; it is also about maintaining resilience. During extreme access pattern volatility, network switches or interface cards can experience transient errors or drop packets. A highly available NAS system treats a path that shows these transient errors as degraded.

Transparent Traffic Redirection

When a path degrades, the storage controller must redirect traffic without severing the application connection. Using protocols like SMB Multichannel or NFSv4.1 session trunking, the enterprise NAS storage infrastructure can shift active sessions to alternate paths transparently.

The application layer remains completely unaware that the underlying physical routing has changed. The database continues to process queries, and the virtual machines continue to run smoothly. This transparent redirection is a critical component of maintaining strict Service Level Agreements (SLAs) in enterprise data centers.
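
The failover logic can be modeled, very loosely, as a path set that counts consecutive errors and switches the active path once a limit is reached. This is a conceptual sketch only: `PathSet`, `error_limit`, and `report` are hypothetical names, and the real redirection happens inside the SMB or NFS protocol stack rather than in application code.

```python
class PathSet:
    """Tracks consecutive errors per path and picks the active one."""

    def __init__(self, paths: list[str], error_limit: int = 3):
        self.errors = {p: 0 for p in paths}   # consecutive error count per path
        self.error_limit = error_limit        # errors before a path is degraded
        self.active = paths[0]

    def healthy(self) -> list[str]:
        """Paths whose consecutive error count is below the limit."""
        return [p for p, n in self.errors.items() if n < self.error_limit]

    def report(self, path: str, ok: bool) -> str:
        """Record the outcome of one I/O and return the path to use next."""
        self.errors[path] = 0 if ok else self.errors[path] + 1
        if self.active not in self.healthy():
            candidates = self.healthy()
            if not candidates:
                raise RuntimeError("no healthy paths remain")
            self.active = candidates[0]
        return self.active
```

The caller keeps using whatever `report` returns, so a switch from one interface to another never surfaces as an error as long as at least one healthy path remains.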

Maintaining Stability in Unpredictable Architectures

Managing data flow in modern IT environments requires infrastructure that can think and adapt. Volatility in access patterns is no longer an edge case; it is the standard operating condition for consolidated data centers. Organizations cannot rely on static network configurations to handle the diverse and unpredictable demands of virtualized workloads, containerized applications, and real-time analytics.

By implementing dynamic path selection, telemetry-driven load balancing, and transparent session redirection, a modern NAS system keeps storage networks fluid and responsive. Investing in intelligent enterprise NAS storage infrastructure allows organizations to decouple application performance from the limits of any single physical network path. As workloads continue to scale and access patterns grow even more complex, this systematic approach to data routing will remain a foundational requirement for enterprise IT resilience.
