Enterprise data access is inherently unpredictable. While baseline workloads follow established schedules, anomalies occur frequently. A sudden software deployment, a randomized security audit, or an unexpected surge in user traffic can instantly alter read and write ratios. This phenomenon, known as access pattern redistribution, places immense stress on storage infrastructure. When an array calibrated for sequential throughput is suddenly bombarded by random I/O requests, latency spikes and application performance degrades.

To mitigate these disruptions, modern NAS storage solutions utilize dynamic architectures designed to absorb and adapt to volatile I/O patterns. Rather than relying on static volume provisioning, these systems deploy automated mechanisms to rebalance workloads in real time. Understanding how these protocols function is critical for storage engineers tasked with maintaining high availability and low latency during unpredictable events.

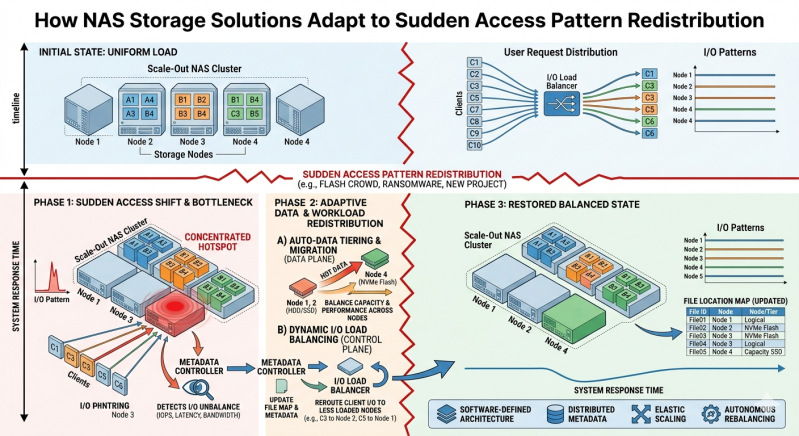

The Mechanics of Access Pattern Redistribution

Access pattern redistribution occurs when the fundamental shape of data requests (request size, ordering, and read/write mix) changes without warning. Storage arrays are typically optimized for specific profiles. For instance, backup jobs generate large, sequential writes, while database queries often generate small, random reads. When a workload rapidly shifts from one profile to another, the underlying storage controllers must change how they cache, prefetch, and schedule I/O.
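To make the profile shift concrete, the sketch below scores a stream of requests by how often each request begins exactly where the previous one ended. The function name, the `(offset, length)` request format, and the idea of watching this score for sudden drops are illustrative assumptions, not a description of any particular controller's detection logic.

```python
def sequential_ratio(requests: list[tuple[int, int]]) -> float:
    """Return the fraction of requests that start where the previous one ended.

    Each request is an (offset, length) pair measured in blocks. A value near
    1.0 looks like a streaming backup; a value near 0.0 looks like random I/O.
    """
    if len(requests) < 2:
        return 0.0
    contiguous = sum(
        1
        for (prev_off, prev_len), (off, _) in zip(requests, requests[1:])
        if off == prev_off + prev_len
    )
    return contiguous / (len(requests) - 1)


# A backup-style stream: large requests laid end to end.
backup = [(0, 256), (256, 256), (512, 256), (768, 256)]
# A database-style stream: small reads scattered across the volume.
oltp = [(9120, 1), (33, 1), (70411, 1), (512, 1)]
```

Here `sequential_ratio(backup)` is 1.0 and `sequential_ratio(oltp)` is 0.0. A controller that sees this score on a volume fall sharply between monitoring windows has evidence of the kind of redistribution described above.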

If a file system experiences a sudden influx of localized access (often termed a "hot spot"), the storage media holding those specific blocks can become an I/O bottleneck. Traditional static storage arrays struggle with these events because their caching algorithms and spindle resources are rigidly defined. By contrast, modern Network Attached Storage ecosystems are engineered with abstraction layers that decouple logical data access from physical disk limitations. This allows the system to implement reactive protocols that relieve bottlenecks before they significantly affect the end user.

Dynamic Caching and Memory Allocation

The first line of defense against access pattern redistribution is the system cache. When Network Attached Storage experiences a sudden spike in read requests for specific files, it uses adaptive caching algorithms to serve that data directly from high-speed memory.

Adaptive Replacement Cache (ARC)

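Advanced NAS storage solutions frequently use algorithms such as the Adaptive Replacement Cache (ARC) instead of legacy Least Recently Used (LRU) models. ARC keeps two lists of cached blocks, one for blocks accessed once recently and one for blocks accessed repeatedly, plus "ghost" lists that remember recently evicted block IDs. Hits on those ghost lists tell ARC which side of the cache is short of space, and it shifts its memory allocation accordingly. If a workload suddenly shifts to repeatedly reading the same localized dataset, those blocks migrate to the frequency list and stay in primary cache, and a one-time sequential scan cannot flush them out the way it would under plain LRU. This prevents the controllers from repeatedly fetching data from slower backend disks, neutralizing much of the I/O penalty of the new access pattern.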
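The following is a compact sketch of the ARC policy as published by Megiddo and Modha (2003), tracking block IDs only. The class and method names are hypothetical, and production implementations (ZFS's ARC, for example) add locking, data buffers, and further refinements. `t1` and `t2` hold cached blocks seen once and seen repeatedly; `b1` and `b2` are the ghost lists whose hits steer the target size `p` of `t1`.

```python
from collections import OrderedDict


class ARCCache:
    """Minimal Adaptive Replacement Cache over block IDs (no data stored).

    Each OrderedDict keeps its least recently used key first.
    """

    def __init__(self, capacity: int) -> None:
        self.c = capacity
        self.p = 0.0  # adaptive target size for t1
        self.t1, self.t2 = OrderedDict(), OrderedDict()  # cached: recency / frequency
        self.b1, self.b2 = OrderedDict(), OrderedDict()  # ghosts evicted from t1 / t2

    def __contains__(self, key) -> bool:
        # Only t1 and t2 hold data; ghost entries do not count as cached.
        return key in self.t1 or key in self.t2

    def _replace(self, key) -> None:
        # Evict from t1 if it is over its target p, otherwise from t2.
        if self.t1 and (
            len(self.t1) > self.p or (key in self.b2 and len(self.t1) == self.p)
        ):
            old, _ = self.t1.popitem(last=False)
            self.b1[old] = None
        else:
            old, _ = self.t2.popitem(last=False)
            self.b2[old] = None

    def access(self, key) -> bool:
        """Record one block access; return True on a cache hit."""
        if key in self.t1:  # second access: promote to the frequency list
            del self.t1[key]
            self.t2[key] = None
            return True
        if key in self.t2:  # frequent block: refresh its MRU position
            self.t2.move_to_end(key)
            return True
        if key in self.b1:  # recency side was too small: grow p
            self.p = min(self.c, self.p + max(len(self.b2) / len(self.b1), 1))
            self._replace(key)
            del self.b1[key]
            self.t2[key] = None
            return False
        if key in self.b2:  # frequency side was too small: shrink p
            self.p = max(0.0, self.p - max(len(self.b1) / len(self.b2), 1))
            self._replace(key)
            del self.b2[key]
            self.t2[key] = None
            return False
        # Complete miss: make room according to the ARC rules.
        if len(self.t1) + len(self.b1) == self.c:
            if len(self.t1) < self.c:
                self.b1.popitem(last=False)
                self._replace(key)
            else:
                self.t1.popitem(last=False)
        else:
            total = len(self.t1) + len(self.t2) + len(self.b1) + len(self.b2)
            if total >= self.c:
                if total == 2 * self.c:
                    self.b2.popitem(last=False)
                self._replace(key)
        self.t1[key] = None
        return False
```

With a two-block cache, the access sequence `a, a, x, y, z` leaves `a` cached: the repeated read promotes it to `t2`, and the one-off blocks are evicted from `t1` instead. A plain LRU cache of the same size would have evicted `a` as soon as `y` arrived.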

Write Coalescing

Sudden shifts in write patterns, particularly bursts of random writes, can overwhelm storage controllers. To manage this, NAS storage solutions employ write coalescing. The system captures incoming write requests in non-volatile random-access memory (NVRAM) and can acknowledge them to clients once they are safely stored there. The controller then organizes these fragmented requests into large, sequential stripes before committing them to the physical disks. This transforms a punishing random write workload into a far more efficient sequential operation and keeps the backend disks from becoming congested.
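As a rough illustration of the coalescing idea, the sketch below stages block writes in a dictionary standing in for NVRAM, then flushes them as runs of adjacent logical block addresses (LBAs). Sorting and merging by address is one simple approach chosen for clarity; copy-on-write and log-structured file systems instead write whatever is staged into freshly allocated contiguous space. The class name and the block-addressed interface are assumptions.

```python
class WriteCoalescer:
    """Stage small writes in memory (a stand-in for NVRAM) and flush them
    as contiguous runs of logical block addresses."""

    def __init__(self) -> None:
        self.pending: dict[int, bytes] = {}  # LBA -> latest staged payload

    def write(self, lba: int, data: bytes) -> None:
        # A newer write to the same block supersedes the staged one,
        # so repeated overwrites reach disk only once.
        self.pending[lba] = data

    def flush(self) -> list[tuple[int, bytes]]:
        """Return (start_lba, payload) extents in ascending LBA order."""
        runs: list[tuple[int, list[bytes]]] = []
        for lba in sorted(self.pending):
            if runs and runs[-1][0] + len(runs[-1][1]) == lba:
                runs[-1][1].append(self.pending[lba])  # extends the current run
            else:
                runs.append((lba, [self.pending[lba]]))  # gap: start a new run
        self.pending.clear()
        return [(start, b"".join(blocks)) for start, blocks in runs]
```

Six scattered writes to LBAs 7, 3, 4, 5, 20, and 6 flush as two extents: a five-block run starting at LBA 3 and a single block at LBA 20.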

Automated Storage Tiering

When an access pattern redistribution persists beyond a temporary spike, caching alone is insufficient. The data must be physically relocated to media that can sustain the required performance levels. Network Attached Storage addresses this through automated storage tiering.

Sub-LUN Data Movement

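Enterprise NAS systems divide data into granular blocks, typically smaller than a whole LUN (sub-LUN granularity), so that different parts of the same volume can reside on different tiers. The storage controller continuously monitors the I/O temperature of these blocks, meaning how frequently each one has been accessed recently. If an archived dataset on slow, high-capacity hard disk drives (HDDs) suddenly becomes active, the system's tiering engine detects the rising I/O demand on the affected blocks.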
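One simple way to model I/O temperature is an exponentially decayed access count per block, so recent activity outweighs old activity. The sketch below assumes fixed monitoring intervals, a decay factor of 0.5, and a hot threshold of 100; all three are illustrative tuning values, and the class name is hypothetical.

```python
class BlockHeatMap:
    """Track per-block I/O temperature as an exponentially decayed count."""

    def __init__(self, decay: float = 0.5, hot_threshold: float = 100.0) -> None:
        self.decay = decay                  # share of previous heat kept each interval
        self.hot_threshold = hot_threshold  # heat at which a block counts as hot
        self.heat: dict[int, float] = {}    # block ID -> current heat

    def record_interval(self, io_counts: dict[int, int]) -> set[int]:
        """Fold one monitoring interval's per-block I/O counts into the map.

        Returns the blocks that crossed the hot threshold in this interval,
        i.e. the candidates a tiering engine would consider promoting.
        """
        newly_hot: set[int] = set()
        for block in set(self.heat) | set(io_counts):
            old = self.heat.get(block, 0.0)
            new = self.decay * old + io_counts.get(block, 0)
            self.heat[block] = new
            if old < self.hot_threshold <= new:
                newly_hot.add(block)
        return newly_hot
```

If archived block 7 sees 10 I/Os in one interval and 300 in the next, its heat rises from 10 to 305 and it is reported as newly hot; in a following quiet interval it cools to 152.5 without being reported again.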

Real-Time Promotion and Demotion

Once the system detects the shifting access pattern, it automatically promotes the "hot" blocks to a higher-performance storage tier, such as NVMe or SAS solid-state drives (SSDs). This data movement occurs transparently in the background. As the hot blocks are promoted, cold blocks are simultaneously demoted to lower tiers to free up premium flash capacity. By physically relocating the data to match the new access pattern, NAS storage solutions ensure that high-priority applications receive the necessary IOPS without requiring manual intervention from a storage administrator.
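Given per-block heat scores, a promotion and demotion pass can be sketched as ranking blocks and keeping the hottest ones on flash. The function name, the fixed number of flash slots, and the tie-break by block ID are assumptions; a production engine would also need to throttle relocation traffic and add hysteresis so blocks do not bounce between tiers.

```python
def plan_moves(
    heat: dict[int, float], on_ssd: set[int], ssd_slots: int
) -> tuple[set[int], set[int]]:
    """Return (promote, demote) block IDs so the flash tier holds the
    `ssd_slots` hottest blocks."""
    # Hottest first; ties broken by block ID so the plan is deterministic.
    ranked = sorted(heat, key=lambda block: (-heat[block], block))
    target = set(ranked[:ssd_slots])
    promote = target - on_ssd  # hot blocks still sitting on HDD
    demote = on_ssd - target   # cooled blocks occupying flash
    return promote, demote
```

With two flash slots holding blocks 1 and 2, a burst that gives archived block 9 a heat of 500 yields a plan to promote block 9 and demote block 2, the coolest block currently on flash.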

Scale-Out Architecture and Load Balancing

Hardware limitations eventually bottleneck even the most efficient caching and tiering algorithms. When a sudden access shift generates more I/O than a single controller can process, scale-out Network Attached Storage provides a structural advantage.

Distributed File Systems

Unlike traditional scale-up arrays, which rely on a dual-controller architecture, scale-out NAS operates as a cluster of independent nodes that share a single global namespace. When an access pattern shift creates a massive influx of traffic to a specific directory, the distributed file system can spread that load across the entire cluster.
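How a given product spreads a directory across nodes is implementation-specific. As one illustrative technique, the sketch below uses rendezvous (highest-random-weight) hashing to assign each file path to a node, so the files of a single busy directory land on many nodes rather than one. The function name, path format, and node labels are hypothetical.

```python
import hashlib


def owner_node(path: str, nodes: list[str]) -> str:
    """Pick the node that owns `path` using rendezvous hashing.

    Every node gets a pseudo-random score for the path and the highest
    score wins, so removing a node reassigns only the paths it owned.
    """

    def score(node: str) -> int:
        digest = hashlib.sha256(f"{node}|{path}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return max(nodes, key=score)
```

Hashing a directory of a thousand render frames across four nodes spreads its files over all four, and dropping one node leaves the placement of every file it did not own unchanged.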

Client Redirection and Network Optimization

If one node becomes saturated by a localized I/O spike, the storage operating system automatically redirects incoming client connections to nodes with available processing capacity. This lateral distribution of network traffic prevents localized network port saturation and ensures that CPU utilization remains balanced across the cluster hardware.
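The redirection decision itself can be sketched as follows: keep a client on its current node unless that node is past a saturation threshold, otherwise send it to the least-utilized node. The 0.85 threshold, the utilization map, and the function name are assumptions; actual systems differ in the signals they weigh (CPU, network, connection counts) and in how the redirect is delivered, for example through DNS or protocol-level referrals.

```python
def pick_node(
    utilization: dict[str, float], current: str, saturation: float = 0.85
) -> str:
    """Choose the node that should serve a new client connection.

    `utilization` maps node name -> load in [0, 1]. The client stays on
    `current` while that node is below `saturation`.
    """
    if utilization[current] < saturation:
        return current
    # Least-loaded node; the name is a tie-break so the choice is deterministic.
    return min(utilization, key=lambda node: (utilization[node], node))
```

Keeping a client on its current node while it has headroom avoids needless reconnections; redirection only kicks in once a localized spike pushes that node past the threshold.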

Enforcing Quality of Service (QoS)

Not all data is created equal, and not all sudden access shifts should be permitted to consume maximum system resources. When an aggressive, low-priority workload suddenly spikes, it can consume controller cycles and starve mission-critical applications.

To prevent this "noisy neighbor" problem, administrators use Quality of Service (QoS) policies built into enterprise Network Attached Storage. QoS allows storage engineers to define strict maximums and minimums for IOPS, throughput, and latency on a per-volume or per-application basis. If a localized workload redistribution threatens to overwhelm the array, the QoS engine actively throttles the offending traffic. This guarantees that critical databases and core virtual infrastructure maintain predictable performance levels, regardless of how other access patterns fluctuate on the same physical hardware.
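An IOPS ceiling is often modeled as a token bucket. The sketch below shows a per-volume limiter of that kind; the class name, the burst size, and the caller-supplied clock are assumptions for illustration, and it covers only the maximum side of a QoS policy. Guaranteed minimums would need a scheduler that reserves capacity across all volumes, which this sketch does not attempt.

```python
class IopsLimiter:
    """Token bucket enforcing an IOPS ceiling on one volume."""

    def __init__(self, max_iops: float, burst: float) -> None:
        self.max_iops = max_iops  # sustained refill rate, tokens per second
        self.burst = burst        # bucket depth: I/Os allowed back to back
        self.tokens = burst       # start with a full bucket
        self.last = 0.0           # time of the previous admit() call, seconds

    def admit(self, now: float) -> bool:
        """Return True if an I/O arriving at `now` may proceed immediately."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.burst, self.tokens + elapsed * self.max_iops)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # over the ceiling: the caller queues or delays this I/O
```

A volume capped at 100 IOPS with a burst of 10 that receives 15 requests at the same instant admits 10 and throttles 5; one second later its bucket has refilled. Keeping one limiter per volume means a noisy neighbor drains only its own bucket.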

Maintaining Predictable Performance Under Load

The reality of managing enterprise data is that access patterns will inevitably change. A system's reliability is defined by how efficiently it processes these disruptions. Through a combination of adaptive memory algorithms, sub-LUN tiering, clustered load balancing, and strict QoS enforcement, modern NAS storage solutions transform unpredictable workloads into manageable data streams.

By automating the mechanical responses to I/O volatility, these storage architectures eliminate the need for reactive, manual provisioning. For IT organizations prioritizing continuous availability and stringent service level agreements, deploying adaptive storage infrastructure is a technical necessity. It ensures that the storage layer remains a robust, flexible foundation capable of supporting dynamic business applications under changing operational conditions.
