Enterprise data centers face a persistent challenge: maintaining consistent data transfer rates during periods of peak traffic. When hundreds of users and automated applications simultaneously request access to large datasets, the resulting packet influx can easily overwhelm the available bandwidth. This creates data transfer congestion, leading to high latency, dropped packets, and degraded application performance.

Understanding the mechanisms required to manage these traffic spikes is critical for system administrators and network engineers. Without a systematic approach to traffic management, even the most expensive hardware can falter under the pressure of concurrent read/write requests. The architecture must include mechanisms that queue, route, and prioritize incoming and outgoing data.

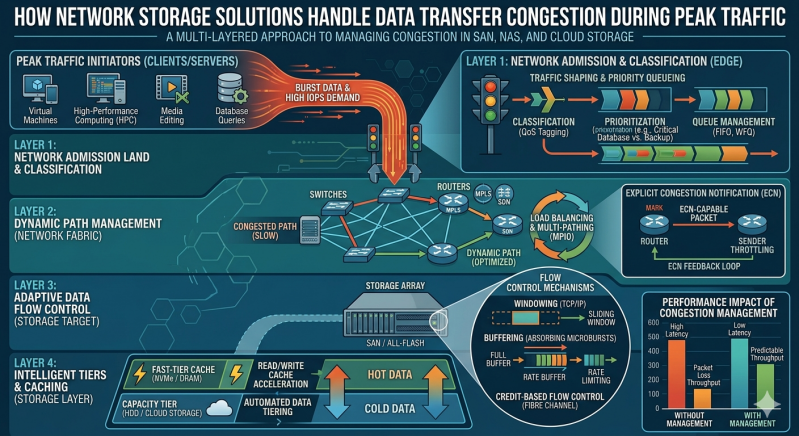

This article examines the specific technical strategies that modern Network Storage Solutions use to handle data transfer congestion. By exploring techniques such as Quality of Service (QoS), link aggregation, and intelligent caching, readers will gain a comprehensive understanding of how to configure their infrastructure for maximum resilience.

The Mechanics of Peak Traffic Congestion

Data transfer congestion occurs when the volume of network traffic exceeds the maximum capacity of a network path or storage interface. During peak hours, multiple clients attempt to initiate input/output (I/O) operations simultaneously. The storage controller must process these requests, read data from or write data to the physical disks, and send acknowledgments back through the network switch.

When the arrival rate of these requests exceeds the processing rate of the storage controller or the throughput limit of the network interface cards (NICs), a bottleneck forms. Packets begin to queue in the buffers of the network switches and the storage arrays. Once those buffers fill, subsequent packets are dropped. The Transmission Control Protocol (TCP) responds by retransmitting the lost segments and shrinking its congestion window. When many flows lose packets at the same moment, they all back off and retry, and repeated retransmission timeouts can leave the link idle between bursts, driving overall throughput far below the link's rated capacity.
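The point at which drops begin can be reproduced with a toy discrete-time model. This Python sketch assumes a single drop-tail buffer in front of a bottleneck that drains a fixed number of packets per tick; it deliberately leaves out TCP's retransmission and back-off logic, so it shows only where loss starts, not the full throughput collapse. The function name and parameters are hypothetical.

```python
def simulate_queue(arrivals_per_tick, service_per_tick, buffer_size, ticks):
    """Toy drop-tail queue in front of a fixed-rate bottleneck.

    Returns (served, dropped, still_queued) after `ticks` time steps.
    """
    queue = served = dropped = 0
    for _ in range(ticks):
        # Arrivals that do not fit in the remaining buffer space are dropped.
        accepted = min(arrivals_per_tick, buffer_size - queue)
        dropped += arrivals_per_tick - accepted
        queue += accepted
        # The bottleneck (controller or NIC) drains at most service_per_tick.
        done = min(queue, service_per_tick)
        queue -= done
        served += done
    return served, dropped, queue


print(simulate_queue(8, 10, 20, 10))   # (80, 0, 0): below capacity, no drops
print(simulate_queue(12, 10, 20, 10))  # (100, 10, 10): buffer fills, then 2 drops per tick
```

With 12 packets arriving per tick against a service rate of 10 and a 20-packet buffer, the buffer absorbs the surplus for five ticks; after that, the 2-packet excess is dropped on every tick.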

Traffic Management in Network Storage Solutions

To prevent buffer exhaustion and packet loss, enterprise-grade Network Storage Solutions implement several layers of traffic management. These mechanisms do not simply add more bandwidth; they intelligently allocate the existing bandwidth to ensure continuous operation for critical workloads.

Quality of Service (QoS) Implementation

Quality of Service (QoS) is the foundational tool for managing data congestion within Network Storage Solutions. QoS allows administrators to define specific performance parameters for different types of traffic. By assigning priority levels to different workloads, administrators tell the storage system which I/O requests to service first during periods of heavy congestion.

For example, a database supporting a live customer portal will be assigned a higher QoS priority than a routine background backup process. When peak traffic occurs, the storage controller throttles the bandwidth allocated to the backup process, ensuring the database I/O operations continue without noticeable latency. QoS policies can enforce strict limits on Input/Output Operations Per Second (IOPS) or total bandwidth, preventing any single application from monopolizing the system resources.
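The two QoS mechanisms described above, priority levels and IOPS caps, can be combined in a minimal Python sketch. It assumes a single dispatcher that sees every queued I/O, uses strict-priority ordering (0 = most urgent) and a token bucket per capped workload. The class names, priority numbers, and rates are illustrative assumptions, not any vendor's QoS API.

```python
import heapq
import itertools


class TokenBucket:
    """Caps a workload at `rate` operations per second, with bursts up to `burst`."""

    def __init__(self, rate, burst):
        self.rate = rate
        self.capacity = burst
        self.tokens = burst
        self.last = 0.0

    def allow(self, now):
        # Refill for the time elapsed since the last check, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class QosScheduler:
    """Strict-priority dispatch (0 = most urgent) with optional per-workload IOPS caps."""

    def __init__(self, limits=None):
        self.limits = limits or {}     # workload name -> TokenBucket
        self._heap = []
        self._seq = itertools.count()  # FIFO tie-breaker within a priority level

    def submit(self, priority, workload, request):
        heapq.heappush(self._heap, (priority, next(self._seq), workload, request))

    def dispatch(self, now, budget):
        """Issue up to `budget` requests at time `now`; capped workloads wait for tokens."""
        issued, deferred = [], []
        while self._heap and len(issued) < budget:
            item = heapq.heappop(self._heap)
            bucket = self.limits.get(item[2])
            if bucket is None or bucket.allow(now):
                issued.append(item[3])
            else:
                deferred.append(item)  # over its IOPS cap: keep it queued
        for item in deferred:
            heapq.heappush(self._heap, item)
        return issued


qos = QosScheduler(limits={"backup": TokenBucket(rate=20, burst=2)})
for i in range(4):
    qos.submit(2, "backup", f"backup-{i}")
for i in range(3):
    qos.submit(0, "db", f"db-{i}")
print(qos.dispatch(now=0.0, budget=10))   # db-0, db-1, db-2, backup-0, backup-1
print(qos.dispatch(now=0.25, budget=10))  # backup-2, backup-3 (bucket refilled)
```

Strict priority alone can starve low-priority work indefinitely; production schedulers typically add weighted fair sharing or minimum guarantees, which this sketch omits.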

Link Aggregation and Multipathing

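Physical bottlenecks are mitigated through Link Aggregation (LAG) and multipathing. Link Aggregation binds multiple physical network interfaces into a single logical channel. If a storage array has four 10GbE ports, LAG combines them into one logical link with 40 Gb/s of aggregate capacity. Because each traffic flow is assigned to a single member link (usually by hashing packet header fields), any one TCP connection is still limited to 10 Gb/s; the relief during peak traffic comes from spreading many concurrent client flows across all four ports.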
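Per-flow hashing is why a single stream cannot exceed one member link's speed. The Python sketch below uses CRC32 over the flow's 5-tuple as a stand-in for a switch's hardware hash; real switches and the Linux bonding driver (for example its `layer3+4` transmit hash policy) use their own hash functions, so `select_member_link` is a hypothetical illustration.

```python
import zlib


def select_member_link(src_ip, dst_ip, src_port, dst_port, proto, n_links):
    """Map a flow's 5-tuple to one LAG member link (0 .. n_links-1).

    Every packet of the same flow hashes to the same link, which keeps
    packets in order but caps a single flow at one member's speed.
    """
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}|{proto}".encode()
    return zlib.crc32(key) % n_links


# Many client flows (different source ports) spread across four 10GbE members.
usage = [0, 0, 0, 0]
for port in range(40000, 41000):
    usage[select_member_link("10.0.0.5", "10.0.0.100", port, 445, "tcp", 4)] += 1
print(usage)  # flow counts per member link
```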

Multipathing takes this a step further by establishing multiple independent routes between the servers and the Network Storage Solutions. Using a multipath I/O (MPIO) framework such as Windows MPIO or Linux dm-multipath, the host can distribute I/O across paths with policies such as round-robin or least queue depth, steering new requests away from a busy switch or cable, and fail over to a surviving path if one goes down. This active-active configuration improves availability and balances the load across the entire physical infrastructure.
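A path selector modeled on the least-queue-depth policy fits in a few lines of Python. `Path`, `pick_path`, and `complete_io` are hypothetical names, and the sketch assumes the host tracks in-flight I/Os per path; real MPIO implementations also handle path probing, failback, and vendor-specific device modules, which are omitted here.

```python
from dataclasses import dataclass


@dataclass
class Path:
    name: str
    healthy: bool = True
    outstanding: int = 0  # I/Os issued on this path and not yet completed


def pick_path(paths):
    """Least-queue-depth policy: choose the healthy path with the fewest
    outstanding I/Os, so a congested path naturally receives less new work."""
    candidates = [p for p in paths if p.healthy]
    if not candidates:
        raise RuntimeError("no healthy path to storage")
    best = min(candidates, key=lambda p: p.outstanding)
    best.outstanding += 1
    return best


def complete_io(path):
    """Call when the target acknowledges an I/O sent on `path`."""
    path.outstanding -= 1
```

For example, with paths holding 5 and 2 outstanding I/Os, the next request goes to the second path; if that path is marked unhealthy, traffic fails over to the first.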

Optimizing NAS Storage for High Traffic

While block-level storage often runs over Fibre Channel, whose credit-based flow control keeps switch buffers from overflowing, file-level systems like NAS Storage must use other techniques to mitigate congestion. NAS Storage relies on Ethernet and TCP/IP networks, making it particularly susceptible to Layer 2 broadcast storms and to heavy concurrent user access.

Caching and Tiering Strategies

One of the most effective ways NAS Storage handles peak loads is through intelligent caching. When multiple clients request the same file, retrieving it from the physical spinning disks (HDDs) repeatedly causes severe I/O bottlenecks. NAS Storage architectures utilize high-speed Solid State Drives (SSDs) or Non-Volatile Memory Express (NVMe) drives as an intermediate cache layer.

Frequently accessed data, known as "hot data," is held in this high-speed cache. During a peak traffic event, the NAS Storage controller serves read requests for hot data directly from the cache, often with sub-millisecond latency, without touching the slower underlying disk arrays. Writes can be absorbed the same way: with write-back caching, the controller acknowledges a write as soon as it lands in the cache and destages it to the slower disks later, typically in larger sequential batches and often deferred until load subsides.
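The read-hit and write-back behavior can be modeled with a small Python class. This is a sketch under simplifying assumptions: a plain dict stands in for the HDD tier, LRU is the eviction policy, and `flush()` plays the role of the background destage. `WriteBackCache` is a hypothetical name. Real arrays also protect acknowledged-but-unwritten data with battery- or flash-backed cache or mirroring between controllers, which a toy model cannot show.

```python
from collections import OrderedDict


class WriteBackCache:
    """Toy SSD tier in front of a slow backing store (a dict here)."""

    def __init__(self, backing, capacity):
        self.backing = backing      # stands in for the HDD array
        self.capacity = capacity
        self.cache = OrderedDict()  # block -> data, least recently used first
        self.dirty = set()          # blocks acknowledged but not yet on disk
        self.disk_reads = 0

    def _evict_if_full(self):
        while len(self.cache) > self.capacity:
            block, data = self.cache.popitem(last=False)  # evict the LRU block
            if block in self.dirty:                       # persist before dropping
                self.backing[block] = data
                self.dirty.discard(block)

    def read(self, block):
        if block in self.cache:
            self.cache.move_to_end(block)  # cache hit: refresh recency
            return self.cache[block]
        self.disk_reads += 1               # cache miss: go to the slow tier
        data = self.backing[block]
        self.cache[block] = data
        self._evict_if_full()
        return data

    def write(self, block, data):
        self.cache[block] = data
        self.cache.move_to_end(block)
        self.dirty.add(block)              # acknowledged now, persisted later
        self._evict_if_full()

    def flush(self):
        """Destage dirty blocks in block order, which favors sequential disk writes."""
        for block in sorted(self.dirty):
            self.backing[block] = self.cache[block]
        self.dirty.clear()
```

Reading the same hot block a hundred times costs one disk read; a write stays only in the cache until `flush()` or eviction pushes it to the backing store.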

Load Balancing Across Nodes

Modern scale-out NAS Storage systems distribute the data workload across multiple interconnected storage nodes. Instead of funneling all network traffic through a single controller head, a scale-out architecture allows client requests to be processed by any available node in the cluster.

During peak traffic loads, internal load balancers monitor the CPU and network utilization of each node. If one node receives a disproportionate share of traffic, new incoming connections are automatically redirected to nodes with available capacity. This horizontal scaling keeps any single node in the NAS Storage environment from becoming overwhelmed and maintains smooth data transfers as long as the cluster as a whole has spare capacity; when it does not, more nodes can be added.
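A load balancer's placement decision can be approximated as choosing the node with the lowest weighted utilization. In this Python sketch, `route_connection`, the equal CPU/network weights, and the node names are illustrative assumptions; scale-out products use their own metrics and redirection mechanisms.

```python
def route_connection(nodes, cpu_weight=0.5, net_weight=0.5):
    """Return the node with the lowest weighted utilization.

    `nodes` maps node name -> (cpu_utilization, network_utilization),
    each a fraction between 0.0 and 1.0.
    """
    def load(name):
        cpu, net = nodes[name]
        return cpu_weight * cpu + net_weight * net

    return min(nodes, key=load)


cluster = {"node-a": (0.90, 0.80), "node-b": (0.30, 0.40), "node-c": (0.60, 0.30)}
print(route_connection(cluster))  # node-b (about 0.35, vs 0.85 and 0.45)
```

Changing the weights changes the answer: if only network utilization counts (`cpu_weight=0.0, net_weight=1.0`), `node-c` becomes the least-loaded choice.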

Advanced Protocol Enhancements

Beyond hardware configurations and basic routing, the underlying file sharing protocols have evolved to handle congestion more efficiently.

SMB Multichannel and NFSv4 Features

Server Message Block (SMB) 3.0 introduced SMB Multichannel, a feature that allows file servers to use multiple network connections simultaneously. If a client and a storage array both have multiple NICs, SMB Multichannel automatically detects them and establishes multiple parallel TCP connections. This aggregates the bandwidth of those NICs and provides fault tolerance: if one connection fails, traffic continues over the others.
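To illustrate how parallel connections raise throughput, the Python sketch below splits one large transfer into fixed-size chunks and assigns them round-robin to the available channels, so each NIC carries part of the data. This is not the SMB wire protocol: `plan_multichannel_transfer`, the chunk size, and the channel names are hypothetical, and real SMB clients decide for themselves how to spread requests across channels.

```python
def plan_multichannel_transfer(size_bytes, chunk_bytes, channels):
    """Assign (offset, length) chunks of one transfer round-robin to channels."""
    plan = {ch: [] for ch in channels}
    offset, i = 0, 0
    while offset < size_bytes:
        length = min(chunk_bytes, size_bytes - offset)
        plan[channels[i % len(channels)]].append((offset, length))
        offset += length
        i += 1
    return plan


# A 10 MiB read split into 1 MiB chunks over two NICs: each carries five chunks.
MiB = 1024 * 1024
plan = plan_multichannel_transfer(10 * MiB, 1 * MiB, ["nic1", "nic2"])
print({ch: len(chunks) for ch, chunks in plan.items()})  # {'nic1': 5, 'nic2': 5}
```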

Similarly, Network File System version 4 (NFSv4) adds file delegations and the COMPOUND Remote Procedure Call (RPC). A delegation allows the storage server to grant a client local control over a file, reducing the back-and-forth network chatter normally required for file locking and access verification. A COMPOUND request bundles multiple file operations into a single network round trip, reducing per-request overhead and easing congestion on the network.
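The benefit of bundling is easiest to see as round-trip arithmetic. The Python sketch below assumes a fixed network round-trip time and a fixed server processing time per operation (both hypothetical figures) and ignores bandwidth, so it isolates the latency saved by sending operations in one request.

```python
def request_time_us(num_ops, rtt_us, server_us_per_op, compound):
    """Estimated latency, in microseconds, for a sequence of file operations.

    Without bundling, each operation waits for its own round trip;
    a compound request pays the round trip once for the whole batch.
    """
    round_trips = 1 if compound else num_ops
    return round_trips * rtt_us + num_ops * server_us_per_op


# Four operations, 500 us round trip, 100 us of server work each.
separate = request_time_us(4, 500, 100, compound=False)  # 4*500 + 4*100 = 2400
bundled = request_time_us(4, 500, 100, compound=True)    # 1*500 + 4*100 = 900
```

Fewer round trips also means fewer request and reply packets competing for switch buffers during peak load.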

Future-Proofing Your Storage Architecture

Data transfer congestion will remain a critical variable as organizational data footprints continue to expand. Relying on raw bandwidth alone is no longer a viable strategy for enterprise environments. Administrators must actively configure QoS policies, use SSD caching tiers, and implement multipathing to maintain reliable performance.

To ensure your infrastructure is prepared for incoming traffic spikes, begin by auditing your current I/O metrics during known peak hours. Identify the specific bottlenecks in your data path, and evaluate whether your current architecture supports advanced load balancing and protocol enhancements. By systematically applying these technical configurations, you can build a resilient storage network capable of absorbing peak workloads.
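On a Linux host, one starting point for such an audit is `/proc/diskstats`, which exposes cumulative per-device counters. The Python sketch below takes two snapshots and derives IOPS and throughput. It assumes the standard column order described in the Linux kernel's I/O statistics documentation (major, minor, device name, then reads completed, reads merged, sectors read, time reading, writes completed, writes merged, sectors written, and so on) and 512-byte sectors; the function names are hypothetical, and storage arrays usually expose equivalent metrics through their own management tools.

```python
import time


def parse_diskstats(text):
    """Map device name -> (reads, writes, sectors_read, sectors_written)."""
    stats = {}
    for line in text.splitlines():
        f = line.split()
        if len(f) < 14:  # skip short or malformed lines
            continue
        # f[0]=major, f[1]=minor, f[2]=name, f[3]=reads completed,
        # f[5]=sectors read, f[7]=writes completed, f[9]=sectors written
        stats[f[2]] = (int(f[3]), int(f[7]), int(f[5]), int(f[9]))
    return stats


def io_rates(before, after, interval_s):
    """Per-device (IOPS, MB/s) between two snapshots taken interval_s apart."""
    rates = {}
    for dev, (r1, w1, sr1, sw1) in after.items():
        if dev not in before:
            continue
        r0, w0, sr0, sw0 = before[dev]
        iops = ((r1 - r0) + (w1 - w0)) / interval_s
        mb_per_s = ((sr1 - sr0) + (sw1 - sw0)) * 512 / 1e6 / interval_s
        rates[dev] = (iops, mb_per_s)
    return rates


def sample(interval_s=10, path="/proc/diskstats"):
    """Take two snapshots interval_s apart (Linux only) and return io_rates()."""
    with open(path) as f:
        before = parse_diskstats(f.read())
    time.sleep(interval_s)
    with open(path) as f:
        after = parse_diskstats(f.read())
    return io_rates(before, after, interval_s)
```

Running `sample()` repeatedly during known peak hours and comparing the results with off-peak samples shows which devices approach their limits.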
