Network-attached storage (NAS) often serves as the primary data repository for modern IT infrastructure. As organizations scale their data footprint, administrators frequently observe large differences in how these storage arrays handle different types of data retrieval. In particular, sequential read operations and random access workloads can differ in performance by orders of magnitude. Understanding the mechanical and architectural reasons behind this divergence is critical for designing efficient storage solutions.

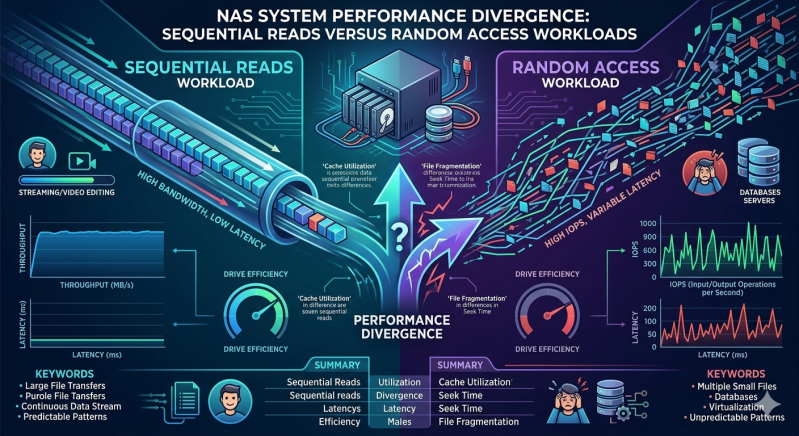

At the core of this disparity is how data is physically laid out and logically retrieved. Sequential workloads read large, contiguous blocks of data, such as streaming a large video file or performing a full system backup. Random access workloads require the storage controller to fetch small, scattered blocks from many physical locations across the disk array. This pattern is typical of virtualization environments, database transactions, and Active Directory queries.

Failing to account for these differing I/O patterns can lead to severe bottlenecks. When deploying NAS systems, IT architects must evaluate the anticipated workload profile carefully. This article examines the technical drivers behind performance divergence in NAS systems and offers actionable strategies for optimizing enterprise NAS storage for mixed-workload environments.

Understanding Sequential Read Operations

Sequential reads allow storage media to operate at maximum efficiency. When an application requests a contiguous file, the storage controller determines the physical location of the initial data block. The read head on a traditional hard disk drive (HDD) moves to the starting track and reads the data as the platter spins beneath it, needing only short track-to-track moves as the transfer continues.

Because the data is organized sequentially, there is minimal mechanical latency. The full seek time and rotational latency are incurred only at the very beginning of the operation. Consequently, sequential performance is generally measured as throughput (megabytes or gigabytes per second). NAS systems excel at these operations because the predictable nature of the requests allows the storage controller to use aggressive read-ahead caching algorithms. The controller anticipates the next required blocks and prefetches them into high-speed RAM, which helps keep the network interface supplied with data.
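The read-ahead behavior described above can be sketched in a few lines. This is a toy model under stated assumptions, not how any particular NAS controller implements prefetching: the class name `ReadAheadCache`, the `window` parameter, and the use of plain block numbers are all hypothetical choices made for illustration.

```python
class ReadAheadCache:
    """Toy read-ahead: prefetch the next blocks once a stream looks sequential."""

    def __init__(self, window: int = 4) -> None:
        self.window = window          # hypothetical prefetch depth, in blocks
        self.next_expected = None     # block number that would continue the stream
        self.prefetched = set()       # block numbers already staged in RAM

    def read(self, block: int) -> bool:
        """Return True if the block was already prefetched (a cache hit)."""
        hit = block in self.prefetched
        self.prefetched.discard(block)
        if block == self.next_expected:
            # The request continues the previous one: stage the following blocks.
            self.prefetched.update(range(block + 1, block + 1 + self.window))
        self.next_expected = block + 1
        return hit


if __name__ == "__main__":
    sequential = ReadAheadCache()
    print([sequential.read(b) for b in range(8)])         # contiguous stream
    scattered = ReadAheadCache()
    print([scattered.read(b) for b in (10, 50, 3, 77)])   # random requests
```

In this sketch the contiguous stream starts hitting the prefetched blocks from the third request onward, while the scattered requests never trigger a prefetch and never hit. Stale prefetched entries are never evicted here, which a real cache would have to handle.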

The Mechanics of Random Access Workloads

Conversely, random access workloads fundamentally disrupt the efficiency of the storage subsystem. When an application requests disparate, non-contiguous data blocks, the storage controller must constantly calculate new physical locations. For mechanical drives, this means the actuator arm must physically move to a new track (seek time) and wait for the correct sector to spin under the head (rotational latency) for every single I/O request.

This constant mechanical repositioning introduces severe latency. Random access performance is therefore measured in input/output operations per second (IOPS) rather than throughput. Even in enterprise NAS storage environments that use solid-state drives (SSDs), which lack moving parts, random access still incurs logical overhead. The flash controller must consult the flash translation layer (FTL) to map logical block addresses to physical pages scattered across different NAND chips. File system overhead and network protocol overhead (such as SMB or NFS locking) add further latency and degrade overall performance.
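As a rough illustration of why mechanical drives deliver so few random IOPS, the per-request cost can be estimated as average seek time plus half a platter rotation. The sketch below is a back-of-the-envelope model only: the function name is hypothetical, and the 8.5 ms average seek time is an assumed, illustrative figure rather than a specification for any drive. It also ignores queuing, caching, and transfer time.

```python
def hdd_random_iops(rpm: float, avg_seek_ms: float) -> float:
    """Estimate random-read IOPS for a single mechanical drive.

    Each random request pays an average seek plus, on average,
    half a platter rotation before the target sector arrives.
    """
    rotation_ms = 60_000.0 / rpm           # time for one full rotation
    avg_rotational_ms = rotation_ms / 2    # expected wait is half a turn
    service_ms = avg_seek_ms + avg_rotational_ms
    return 1000.0 / service_ms


if __name__ == "__main__":
    # 8.5 ms average seek is an assumption chosen for illustration
    print(f"7200 RPM: {hdd_random_iops(7200, 8.5):.0f} IOPS")
```

Under these assumptions a 7200 RPM drive lands at roughly 80 random IOPS, because every request costs more than 12 ms of mechanical positioning.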

Architectural Factors Driving Performance Divergence

Several architectural components within NAS systems determine the severity of the performance gap between sequential and random workloads.

Storage Media and RAID Configurations

The physical media is the primary bottleneck. A 7200 RPM HDD might deliver 150 MB/s of sequential throughput but struggle to achieve 100 IOPS during random reads. Grouping drives into RAID arrays alters this dynamic. A RAID 0 or RAID 10 configuration can significantly boost random IOPS by striping data across multiple spindles, allowing concurrent read operations. Parity-based configurations like RAID 5 or RAID 6 introduce a "write penalty": each small write requires additional back-end reads and writes to update parity. Although this primarily affects writes, the extra back-end I/O competes with reads under heavy mixed workloads, and during degraded operation or a rebuild, reads of missing data must be reconstructed from the remaining drives.
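A common rule of thumb for sizing makes the write penalty concrete: divide the array's raw IOPS by the read fraction plus the write fraction multiplied by the penalty. The sketch below assumes the widely quoted penalty values (1 for RAID 0, 2 for RAID 10, 4 for RAID 5, 6 for RAID 6) and a hypothetical array of eight drives at 100 IOPS each. It is a planning estimate only and ignores controller caches, full-stripe writes, and degraded-mode behavior.

```python
# Commonly cited back-end I/Os per front-end write (rule-of-thumb values)
WRITE_PENALTY = {"raid0": 1, "raid10": 2, "raid5": 4, "raid6": 6}


def array_iops(drives: int, iops_per_drive: float,
               read_fraction: float, level: str) -> float:
    """Estimate front-end IOPS an array can sustain for a read/write mix."""
    raw = drives * iops_per_drive
    write_fraction = 1.0 - read_fraction
    # Each write costs WRITE_PENALTY[level] back-end operations; each read costs one.
    return raw / (read_fraction + write_fraction * WRITE_PENALTY[level])


if __name__ == "__main__":
    # Hypothetical array: 8 drives, 100 random IOPS each, 70% reads
    for level in ("raid0", "raid10", "raid5", "raid6"):
        print(level, round(array_iops(8, 100, 0.7, level)))
```

With a pure read workload (`read_fraction=1.0`) every level returns the same raw figure, which matches the point above that the penalty is primarily a write-side cost.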

File Systems and Metadata Overhead

Enterprise NAS storage arrays rely on advanced file systems like ZFS, Btrfs, or proprietary architectures (e.g., WAFL). These file systems use extensive metadata to manage permissions, deduplication, and snapshots. In a random access workload, the storage controller must frequently read scattered metadata before it can locate the requested data block. This double-read requirement exacerbates the IOPS bottleneck.

Network Protocols and Latency

The network layer introduces its own variables. TCP/IP overhead, packet processing, and protocol chattiness all affect performance. Sequential reads benefit from a larger maximum transmission unit (MTU), such as jumbo frames of about 9,000 bytes instead of the standard 1,500, which carry more payload per packet. Random small-block I/O generates a very large number of small packets and request/response exchanges, increasing CPU interrupts on the storage controller and amplifying protocol latency.
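The effect of MTU and request size on packet counts can be approximated with simple arithmetic. The sketch below assumes 40 bytes of TCP/IPv4 headers per packet (no options) and deliberately ignores NFS or SMB protocol headers, acknowledgements, and NIC offloads, so the numbers are indicative only. All function names are hypothetical.

```python
TCP_IPV4_HEADER_BYTES = 40  # assumed: 20-byte IPv4 + 20-byte TCP, no options
GIB = 1 << 30


def data_packets(request_bytes: int, mtu: int) -> int:
    """Packets needed to carry one response of request_bytes at a given MTU."""
    payload = mtu - TCP_IPV4_HEADER_BYTES
    return (request_bytes + payload - 1) // payload  # ceiling division


def packets_per_gib(block_bytes: int, mtu: int) -> int:
    """Data packets needed to move 1 GiB using fixed-size requests."""
    requests = GIB // block_bytes
    return requests * data_packets(block_bytes, mtu)


if __name__ == "__main__":
    for block in (4096, 1 << 20):
        for mtu in (1500, 9000):
            print(f"block={block:>7} B  mtu={mtu}  "
                  f"requests={GIB // block:>6}  packets={packets_per_gib(block, mtu)}")
```

In this model jumbo frames cut the packet count for 1 MiB requests by roughly a factor of six, but only by a factor of three for 4 KiB requests, and the small-block case still needs 262,144 separate request/response exchanges per GiB compared with 1,024.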

Mitigating the Divergence in Enterprise NAS Storage

To align performance with organizational requirements, storage architects must deploy targeted optimization techniques.

One of the most effective methods for bridging the performance gap is a tiered storage architecture. By placing NVMe or SATA SSDs as an intelligent caching layer in front of high-capacity HDDs, enterprise NAS storage can intercept random I/O requests. Hot data (frequently accessed random blocks) is promoted to the flash tier, delivering high IOPS and low latency. Sequential workloads, which benefit less from flash media, bypass the cache and stream directly from the spinning disks.
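The promotion of hot blocks to a flash tier can be illustrated with a small least-recently-used (LRU) cache. This is a simplified sketch, not the caching policy of any specific NAS product: the class name `FlashReadCache`, the capacity expressed in blocks, and the choice of LRU eviction are assumptions, and the sequential bypass described above is not modeled.

```python
from collections import OrderedDict


class FlashReadCache:
    """Toy LRU read cache standing in for a flash tier in front of HDDs."""

    def __init__(self, capacity_blocks: int) -> None:
        self.capacity = capacity_blocks
        self.blocks = OrderedDict()   # block_id -> True, oldest entry first
        self.hits = 0
        self.misses = 0

    def read(self, block_id: int) -> str:
        """Return which tier served the read: 'flash' or 'hdd'."""
        if block_id in self.blocks:
            self.blocks.move_to_end(block_id)    # mark as most recently used
            self.hits += 1
            return "flash"
        self.misses += 1
        self.blocks[block_id] = True             # promote the block to flash
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)      # evict the least recently used
        return "hdd"

    @property
    def hit_ratio(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


if __name__ == "__main__":
    cache = FlashReadCache(capacity_blocks=2)
    print([cache.read(b) for b in (1, 1, 2, 3, 1)], cache.hit_ratio)
```

The higher the hit ratio on the random working set, the more requests are served at flash latency instead of paying a mechanical seek.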

Optimizing the network fabric is equally important. Remote Direct Memory Access (RDMA) over Converged Ethernet (RoCE) allows the network adapter to transfer data directly to and from application memory, bypassing the OS kernel and most CPU involvement in the data path. Where the storage protocol supports it (for example, SMB Direct or NFS over RDMA), this substantially reduces the latency of processing thousands of random I/O requests.

Frequently Asked Questions (FAQ)

Why do traditional NAS systems struggle with database workloads?

Databases rely heavily on transactional, random read and write operations scattered across tables and indexes. Traditional NAS systems built on mechanical drives cannot reposition read heads fast enough to deliver the required IOPS, leading to high latency and application timeouts.

How does block size affect performance divergence?

Large block sizes (e.g., 1 MB or higher) suit sequential reads and maximize throughput. Random access workloads typically use small block sizes (e.g., 4 KB or 8 KB). Processing millions of small blocks requires far more CPU cycles and metadata lookups than processing a few large blocks.
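The relationship between block size, IOPS, and throughput is simple multiplication, which the following sketch makes explicit. The 100 IOPS figure is the illustrative HDD number used earlier in this article, and the function names are hypothetical.

```python
def throughput_mb_s(iops: float, block_bytes: int) -> float:
    """Throughput in MB/s implied by an IOPS rate at a fixed block size."""
    return iops * block_bytes / 1_000_000


def requests_needed(total_bytes: int, block_bytes: int) -> int:
    """Number of I/O requests needed to read total_bytes at a fixed block size."""
    return -(-total_bytes // block_bytes)  # ceiling division


if __name__ == "__main__":
    gib = 1 << 30
    print(f"100 IOPS at 4 KiB: {throughput_mb_s(100, 4096):.2f} MB/s")
    print(f"1 GiB in 4 KiB requests: {requests_needed(gib, 4096)}")
    print(f"1 GiB in 1 MiB requests: {requests_needed(gib, 1 << 20)}")
```

At 100 IOPS and 4 KiB per request a drive moves well under 1 MB/s, compared with roughly 150 MB/s when the same drive streams sequentially.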

Can SSDs eliminate the sequential vs. random performance gap?

While SSDs drastically reduce random access latency by eliminating mechanical seek times, a divergence still exists. Flash controllers process sequential data more efficiently than random data because of internal NAND architecture and garbage collection behavior. As a result, enterprise NAS storage built on all-flash arrays will still show higher throughput for sequential tasks than for random tasks.

Optimizing Your Storage Infrastructure

Addressing the performance divergence in network storage requires a precise, data-driven approach. Administrators must profile their applications to understand the exact ratio of sequential throughput to random IOPS required by their infrastructure. Relying purely on raw capacity metrics will inevitably result in degraded performance during peak operational hours.

Audit your current I/O profiles using native performance monitoring tools. If your environment is constrained by random access latency, consider moving to a hybrid flash architecture or re-evaluating your RAID topologies. By systematically aligning your hardware architecture with your logical workloads, you help ensure that your enterprise NAS storage delivers predictable, high-speed data availability across all enterprise applications.
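As a starting point for that kind of audit, the share of sequential requests in a captured I/O trace can be estimated by checking whether each request begins where the previous one ended. The sketch below assumes a hypothetical trace format, a list of `(offset, length)` pairs in bytes for a single stream. Real monitoring tools export richer data and their formats will differ.

```python
from typing import List, Tuple


def sequential_fraction(trace: List[Tuple[int, int]]) -> float:
    """Fraction of requests that start exactly where the previous one ended.

    trace is a list of (offset_bytes, length_bytes) pairs in arrival order.
    """
    if len(trace) < 2:
        return 0.0
    sequential = 0
    prev_end = trace[0][0] + trace[0][1]
    for offset, length in trace[1:]:
        if offset == prev_end:
            sequential += 1
        prev_end = offset + length
    return sequential / (len(trace) - 1)


if __name__ == "__main__":
    backup = [(i * 1_048_576, 1_048_576) for i in range(100)]   # contiguous 1 MiB reads
    database = [(4096 * n, 4096) for n in (7, 912, 33, 5051, 18)]  # scattered 4 KiB reads
    print(sequential_fraction(backup), sequential_fraction(database))
```

A value near 1.0 suggests a throughput-bound, sequential profile, while a value near 0.0 suggests an IOPS-bound, random profile that is more likely to benefit from flash caching.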
