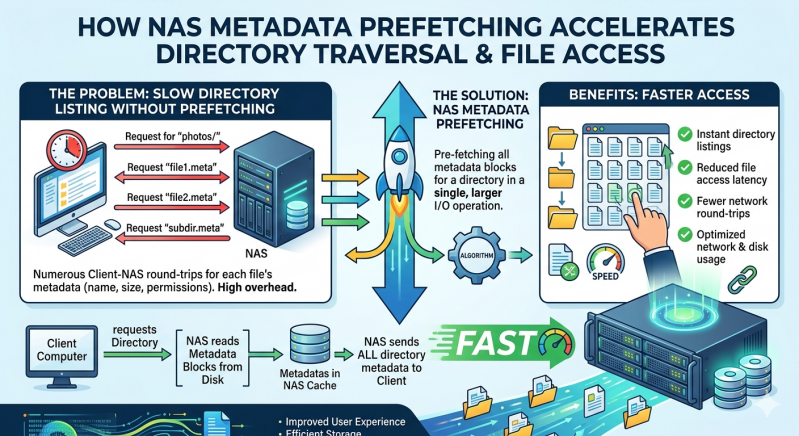

Network-attached storage (NAS) systems face significant performance challenges when handling massive directory structures. To reach a file, the storage controller must read the metadata of every directory component in the path before it can access the target data. These dependent, sequential reads add latency, especially in environments containing millions of files. Metadata prefetching mitigates the problem by anticipating access patterns and loading metadata into cache memory ahead of the actual client request.

This post explains the technical mechanics of metadata prefetching within modern storage architectures. We will examine how predictive caching strategies reduce directory traversal bottlenecks and accelerate file operations.

System administrators and storage architects will gain a comprehensive understanding of how to optimize data access performance. By implementing these advanced caching techniques, organizations can ensure their storage infrastructure meets the rigorous demands of enterprise workloads and high-performance computing applications.

The Mechanics of Metadata Prefetching

Metadata includes essential file attributes such as permissions, creation dates, and physical disk locations. To access a specific file, the system must first read the metadata of the root directory, followed by the metadata of every subdirectory in the path.
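To make the cost of path resolution concrete, here is a minimal sketch that walks a path one component at a time and counts metadata reads. The `Inode` class and `resolve` function are hypothetical illustrations, not any vendor's on-disk format or API; a real file system would fetch each inode from storage media rather than from a Python dictionary.

```python
from dataclasses import dataclass, field


@dataclass
class Inode:
    """Hypothetical in-memory stand-in for a file's or directory's metadata."""
    name: str
    is_dir: bool
    children: dict = field(default_factory=dict)  # child name -> Inode


def resolve(root: Inode, path: str):
    """Walk `path` component by component and count the metadata reads."""
    reads = 1  # the root directory's metadata is always read first
    node = root
    for part in [p for p in path.split("/") if p]:
        if not node.is_dir or part not in node.children:
            raise FileNotFoundError(path)
        node = node.children[part]
        reads += 1  # one more metadata read per path component
    return node, reads


# Build a tiny tree: /projects/run42/out.dat
root = Inode("/", True)
projects = root.children.setdefault("projects", Inode("projects", True))
run = projects.children.setdefault("run42", Inode("run42", True))
run.children["out.dat"] = Inode("out.dat", False)

node, reads = resolve(root, "/projects/run42/out.dat")
print(node.name, reads)  # prints: out.dat 4
```

Each read depends on the result of the previous one, so on uncached media the delays add up with path depth.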

Overcoming Directory Traversal Bottlenecks

When a client requests a file deep within a complex directory tree, the NAS storage system must perform multiple separate read operations just to locate the target data blocks. Each of these reads incurs storage media latency, and because each lookup depends on the result of the previous one, the delays add up. Metadata prefetching mitigates this sequential penalty. Using predictive algorithms, the storage controller identifies sequential read patterns or common directory scanning behaviors. It then proactively retrieves the metadata for subsequent files or adjacent directories into high-speed RAM.

When the client application later requests the next file in the sequence, the NAS storage system serves the metadata directly from the memory cache. On a cache hit, no additional disk read is needed on the request path. When the predictions are accurate, the result is lower latency and more file operations per second. A wrong prediction costs a wasted read and some cache space.
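The behavior described above can be sketched as a small LRU cache with sequential read-ahead. This is an illustrative toy under stated assumptions, not a real controller's algorithm: the class name, the `window` and `capacity` parameters, and the "previous entry plus one" detection rule are all hypothetical. The prefetch also runs inline here for simplicity, whereas a real system would presumably issue it asynchronously so it stays off the client's request path.

```python
from collections import OrderedDict


class PrefetchingMetadataCache:
    """Toy LRU metadata cache with sequential read-ahead (all names hypothetical)."""

    def __init__(self, backing, names, capacity=64, window=4):
        self.backing = backing    # name -> metadata; stands in for slow media
        self.names = names        # directory listing order
        self.index = {n: i for i, n in enumerate(names)}
        self.capacity = capacity  # maximum cached entries
        self.window = window      # how many entries to read ahead
        self.cache = OrderedDict()
        self.last_index = None
        self.hits = 0
        self.demand_misses = 0    # lookups where the client had to wait

    def _load(self, name):
        """Bring one entry into the cache, evicting the least recently used."""
        if name in self.cache:
            self.cache.move_to_end(name)
            return
        self.cache[name] = self.backing[name]
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)

    def lookup(self, name):
        if name in self.cache:
            self.hits += 1
        else:
            self.demand_misses += 1
        self._load(name)
        meta = self.cache[name]
        i = self.index[name]
        # Sequential pattern: this lookup directly follows the previous one.
        if self.last_index is not None and i == self.last_index + 1:
            for nxt in self.names[i + 1 : i + 1 + self.window]:
                self._load(nxt)
        self.last_index = i
        return meta


names = [f"file{n:03d}" for n in range(100)]
backing = {n: {"size": 4096, "mode": 0o644} for n in names}
cache = PrefetchingMetadataCache(backing, names)
for n in names:
    cache.lookup(n)
print(cache.hits, cache.demand_misses)  # prints: 98 2
```

In this in-order scan only the first two lookups miss: the first has no history, and the second establishes the pattern. A random access order would never trigger the read-ahead, which is why prediction accuracy determines the benefit.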

Predictive Caching in Distributed Environments

This prefetching process is particularly critical for scale-out NAS environments. A scale-out NAS architecture distributes data and metadata across multiple discrete storage nodes connected via a backend network. While this architecture provides high capacity and throughput, it complicates directory traversal, because the metadata for different components of a path may live on different nodes.

Without prefetching, traversing directories across multiple nodes in a scale-out NAS adds network latency on top of standard disk latency. Predictive metadata caching allows the cluster to mask this interconnect latency. By fetching metadata from remote nodes into the local cache of the node servicing the client request, the scale-out NAS can deliver near-local performance for distributed data sets.
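A rough way to reason about this masking effect is an expected-latency model weighted by the local cache hit ratio. The function below is a back-of-the-envelope sketch, and the latency figures are made-up illustrative values, not measurements from any product.

```python
def expected_lookup_latency_us(hit_ratio, local_ram_us, remote_fetch_us):
    """Average metadata lookup latency for a given local cache hit ratio.

    remote_fetch_us is the assumed cost of a miss: backend network round
    trip plus the disk read on the remote node.
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * local_ram_us + (1.0 - hit_ratio) * remote_fetch_us


# Illustrative, assumed numbers: 1 us for a RAM hit, 500 us for a remote fetch.
for ratio in (0.0, 0.5, 0.9, 0.99):
    latency = expected_lookup_latency_us(ratio, 1.0, 500.0)
    print(f"hit ratio {ratio:.2f}: {latency:.1f} us")
```

Under these assumptions the remaining misses dominate the average even at high hit ratios, which is why the accuracy of the prediction matters more than the speed of the cache itself.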

Architectural Advantages for Enterprise Workloads

Different storage architectures handle metadata differently. Understanding these differences helps storage engineers select the appropriate solution for their specific application requirements.

Enhancing High-Performance Computing

High-performance computing (HPC) workloads, such as genomic sequencing or electronic design automation, depend heavily on rapid directory traversal. These applications often create, read, and delete millions of small temporary files in rapid succession. Traditional NAS storage struggles to maintain the necessary metadata throughput for these workloads.

By implementing aggressive metadata prefetching, an advanced NAS storage system can help keep the compute cluster busy. The prefetching algorithms analyze the behavior of the HPC application and recognize predictable access patterns. This allows the scale-out NAS to pre-load the necessary inodes and directory entries, so compute nodes spend less time idle waiting for storage responses.

Comparing Storage Solutions

While file-based storage relies heavily on metadata management, block storage systems operate differently. For example, Azure Disk Storage provides raw block-level volumes directly to virtual machines. Because Azure Disk Storage does not manage a file system itself, it does not perform directory-level metadata prefetching. Instead, the guest operating system on the virtual machine formats the disk and handles the file system metadata.

There are distinct use cases for both approaches. Organizations often deploy scale-out NAS for shared file repositories, home directories, and collaborative workflows where multiple users need simultaneous access to the same directory structure. Conversely, they use Azure Disk Storage for transactional databases or applications that require dedicated, low-latency block access without the overhead of network file protocols.

In hybrid cloud deployments, engineers frequently combine these technologies. A virtualized scale-out NAS appliance might be deployed within the cloud environment, using underlying Azure Disk Storage to store the actual data blocks while the NAS software layer manages metadata prefetching and file sharing protocols. This combination pairs the durability of Azure Disk Storage with the file management capabilities of enterprise NAS.

Future-Proofing Data Access Workflows

Data volumes continue to grow at an unprecedented rate, resulting in increasingly complex directory structures. Storage administrators must implement systems capable of handling this metadata explosion without compromising access speeds. Metadata prefetching remains a highly effective mechanism for masking latency and improving directory traversal efficiency.

By understanding how caching algorithms operate within a NAS storage environment, IT teams can better architect their infrastructure. Whether deploying an on-premises scale-out NAS or using cloud infrastructure with Azure Disk Storage, optimizing the metadata path is essential for maintaining application performance and a responsive user experience. Evaluating your current storage controller's caching capabilities is a practical first step toward a more efficient and scalable data center.
