Consistent performance is the foundation of modern data infrastructure. When applications request data, storage architectures must deliver that information with minimal latency. A dedicated cache layer plays a critical role in accelerating these read operations. However, cache capacity is strictly finite. When the cache fills up, the system must decide which data to retain and which to discard.

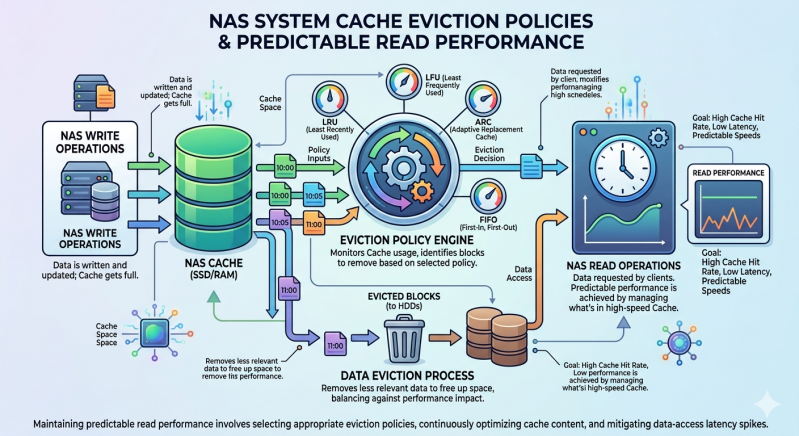

This decision process relies on cache eviction policies. These algorithms dictate exactly how a NAS system handles data turnover. Proper cache management keeps the most relevant data in high-speed memory, which is what makes read performance predictable. If a storage environment uses inefficient eviction logic, administrators will observe severe latency spikes and degraded application responsiveness.

Understanding how these policies function allows storage architects to design better environments. By exploring the mechanics of cache eviction, organizations can configure their infrastructure to handle demanding workloads without compromising speed or reliability.

The Role of Cache in a NAS System

A standard NAS system utilizes multiple storage tiers. The primary storage often consists of high-capacity hard disk drives or standard solid-state drives. While these provide vast space, retrieving data directly from them takes time. To bridge the latency gap, the system places a layer of high-speed memory (typically RAM or NVMe flash) between the storage media and the computing environment.

When a user or application requests a file, the system checks this cache first. A cache hit occurs when the requested data is already present in this high-speed layer, allowing for near-instantaneous retrieval. A cache miss means the system must fetch the data from the slower primary storage, write it to the cache, and then serve it to the user.
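The hit and miss paths described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the `ReadThroughCache` class, its counters, and the dictionary standing in for primary storage are all hypothetical, and eviction is deliberately left out so the read path stays visible.

```python
class ReadThroughCache:
    """Toy read path: check the cache first, fall back to slow storage on a miss."""

    def __init__(self, backing_store):
        self.backing_store = backing_store  # stands in for HDD/SSD primary storage
        self.cache = {}                     # stands in for the RAM/NVMe cache layer
        self.hits = 0
        self.misses = 0

    def read(self, key):
        if key in self.cache:               # cache hit: serve from fast memory
            self.hits += 1
            return self.cache[key]
        self.misses += 1                    # cache miss: fetch from primary storage,
        data = self.backing_store[key]      # write it to the cache, then serve it
        self.cache[key] = data
        return data


store = {"report.docx": b"quarterly numbers"}  # hypothetical file contents
nas = ReadThroughCache(store)
nas.read("report.docx")  # first read misses and fills the cache
nas.read("report.docx")  # second read is served from the cache
```

Because this toy cache never evicts anything, it would eventually hold every file ever read, which is exactly the situation a finite cache cannot allow.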

The primary goal of any enterprise NAS storage environment is to maximize the cache hit ratio, the share of read requests served directly from the cache. A high hit ratio keeps average latency low and read performance predictable. Because the cache cannot hold all the data present on the primary drives, the system must continuously rotate data in and out. This is where eviction policies become essential.
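A rough way to see why the hit ratio matters so much is to treat average read latency as a weighted mix of cache latency and primary-storage latency. The function below is a simplified model, and the latency figures in the example (100 microseconds for cache, 8,000 microseconds for disk) are illustrative assumptions rather than measurements.

```python
def average_read_latency_us(hit_ratio, cache_latency_us, storage_latency_us):
    """Weighted average latency: hits pay the cache cost, misses pay the storage cost."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * cache_latency_us + (1.0 - hit_ratio) * storage_latency_us


# Assumed figures for illustration only.
CACHE_US = 100.0
DISK_US = 8000.0

latency_at_95 = average_read_latency_us(0.95, CACHE_US, DISK_US)
latency_at_80 = average_read_latency_us(0.80, CACHE_US, DISK_US)
```

Under these assumed figures, a 95% hit ratio averages about 495 microseconds per read, while an 80% hit ratio averages about 1,680 microseconds. A fifteen-point drop in hit ratio more than triples the average latency in this model.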

Core Cache Eviction Algorithms

Different workloads require different approaches to data retention. Enterprise NAS storage platforms utilize several established algorithms to manage this process effectively.

Least Recently Used (LRU)

The Least Recently Used (LRU) policy is one of the most common eviction methods. It operates on a simple principle: data accessed most recently is likely to be accessed again soon. The system maintains a timeline of data access. When the cache reaches maximum capacity, the algorithm identifies and evicts the data that has sat unaccessed for the longest period.
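The following sketch shows one common way to express LRU in Python, using `collections.OrderedDict` as the access timeline. The class name `LRUCache` and its `get`/`put` methods are chosen for illustration; real storage systems track blocks with far more compact structures.

```python
from collections import OrderedDict


class LRUCache:
    """Minimal LRU cache: the entry untouched for the longest time is evicted first."""

    def __init__(self, capacity):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.entries = OrderedDict()  # least recently used first, most recent last

    def get(self, key):
        if key not in self.entries:
            return None                    # miss (this sketch cannot store None values)
        self.entries.move_to_end(key)      # a read refreshes the entry's position
        return self.entries[key]

    def put(self, key, value):
        if key in self.entries:
            self.entries.move_to_end(key)
        self.entries[key] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # drop the least recently used entry


cache = LRUCache(2)
cache.put("a", 1)
cache.put("b", 2)
cache.get("a")     # "a" is now the most recently used entry
cache.put("c", 3)  # the cache is full, so "b" is evicted
```

Reading "a" before inserting "c" is what saves it: "b" has gone unaccessed the longest, so it is the one that leaves.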

LRU works exceptionally well for standard file-sharing workloads. Users typically open and modify the same set of documents over a few days before moving on to new projects. However, LRU struggles with sequential database scans, where a massive volume of data is read once and never accessed again. In these scenarios, the scan can flush out important, frequently accessed data, causing a sharp drop in read performance.

First-In, First-Out (FIFO)

The First-In, First-Out (FIFO) algorithm treats the cache strictly as a queue. The first block of data written to the cache is the first block evicted when space is needed, regardless of how often applications access it.

While computationally lightweight and easy for a NAS system to execute, FIFO is rarely used as a primary caching mechanism in high-performance environments. It ignores both how recently and how often data is accessed. An essential system file loaded early might be evicted simply because it is old, forcing the system to retrieve it from slow storage again.
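The weakness is easy to reproduce in a small sketch. The `FIFOCache` class and the key names below are hypothetical; the point is only that reads never influence the eviction order.

```python
from collections import deque


class FIFOCache:
    """Minimal FIFO cache: eviction follows insertion order and ignores reads."""

    def __init__(self, capacity):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.order = deque()  # keys in insertion order, oldest on the left
        self.entries = {}

    def get(self, key):
        return self.entries.get(key)  # a read never changes the eviction order

    def put(self, key, value):
        if key not in self.entries:
            if len(self.entries) >= self.capacity:
                oldest = self.order.popleft()  # first in, first out
                del self.entries[oldest]
            self.order.append(key)
        self.entries[key] = value


fifo = FIFOCache(2)
fifo.put("system.cfg", "hot data")
fifo.put("notes.txt", "cold data")
for _ in range(100):
    fifo.get("system.cfg")       # heavily read, but that earns it no protection
fifo.put("new.bin", "new data")  # evicts "system.cfg" because it arrived first
```

After one hundred reads, "system.cfg" is still the first entry to go, while the untouched "notes.txt" stays in the cache.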

Adaptive Replacement Cache (ARC)

To overcome the limitations of standard LRU, advanced enterprise NAS storage solutions often implement the Adaptive Replacement Cache (ARC) algorithm. ARC dynamically balances two separate lists: one tracking data that was accessed recently and another tracking data that is accessed frequently. It also remembers the identities of recently evicted entries, which tells it which of the two lists deserves more space.

If a workload suddenly shifts from random file access to a heavy sequential read, ARC adjusts the cache distribution on the fly. It protects frequently used data from being flushed out by one-time sequential reads. This adaptability makes ARC effective at maintaining predictable read performance across highly variable enterprise workloads.
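To illustrate the scan resistance described above, here is a compact sketch that follows the general structure of the published ARC algorithm: two resident lists (`t1` for recent, `t2` for frequent) and two "ghost" lists (`b1`, `b2`) that remember only the keys of evicted entries. The class name, the `access` method, and the `load` callback are hypothetical, and production implementations (including the ARC variant in ZFS) differ in many details.

```python
from collections import OrderedDict


class ARCCache:
    """Sketch of ARC: resident lists t1/t2 plus ghost lists b1/b2 of evicted keys."""

    def __init__(self, capacity):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.c = capacity
        self.p = 0.0             # adaptive target size for t1
        self.t1 = OrderedDict()  # resident entries seen once recently
        self.t2 = OrderedDict()  # resident entries seen at least twice
        self.b1 = OrderedDict()  # keys recently evicted from t1
        self.b2 = OrderedDict()  # keys recently evicted from t2

    def _replace(self, hit_in_b2):
        """Evict one resident entry and remember its key in the matching ghost list."""
        t1_len = len(self.t1)
        if t1_len and (t1_len > self.p or (hit_in_b2 and t1_len == self.p)):
            key, _ = self.t1.popitem(last=False)
            self.b1[key] = None
        else:
            key, _ = self.t2.popitem(last=False)
            self.b2[key] = None

    def access(self, key, load):
        """Return (value, was_hit); load(key) reads slow storage on a miss."""
        if key in self.t1:  # second touch: promote to the frequent list
            value = self.t1.pop(key)
            self.t2[key] = value
            return value, True
        if key in self.t2:
            self.t2.move_to_end(key)
            return self.t2[key], True
        if key in self.b1:  # evicted too early from t1: grow the recency target
            self.p = min(float(self.c), self.p + max(len(self.b2) / len(self.b1), 1.0))
            self._replace(False)
            del self.b1[key]
            value = self.t2[key] = load(key)
            return value, False
        if key in self.b2:  # evicted too early from t2: shrink the recency target
            self.p = max(0.0, self.p - max(len(self.b1) / len(self.b2), 1.0))
            self._replace(True)
            del self.b2[key]
            value = self.t2[key] = load(key)
            return value, False
        # Brand-new key: trim history, make room if needed, then insert into t1.
        l1 = len(self.t1) + len(self.b1)
        total = l1 + len(self.t2) + len(self.b2)
        if l1 == self.c:
            if len(self.t1) < self.c:
                self.b1.popitem(last=False)
                self._replace(False)
            else:
                self.t1.popitem(last=False)
        elif total >= self.c:
            if total == 2 * self.c:
                self.b2.popitem(last=False)
            self._replace(False)
        value = self.t1[key] = load(key)
        return value, False


def load(key):
    return f"data for {key}"  # stand-in for a read from primary storage


arc = ARCCache(4)
for key in ["a", "b", "a", "b"]:  # "a" and "b" are each read twice
    arc.access(key, load)
for i in range(100):              # one-time sequential scan
    arc.access(f"scan-{i}", load)
_, a_survived = arc.access("a", load)
_, b_survived = arc.access("b", load)
```

In this run the scan keys cycle through `t1` only, so "a" and "b" remain resident in `t2`. A plain LRU cache of the same size would have lost both after the first few scan reads.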

The Impact of iSCSI NAS Architectures

Storage protocols significantly influence how cache eviction functions. An iSCSI NAS environment handles data at the block level rather than the file level. This fundamental difference changes how the system tracks access patterns.

When a server connects to an iSCSI NAS, it views the storage as a raw, unformatted local drive. The caching algorithms must evaluate read and write requests for specific data blocks without any knowledge of the underlying files.

Because an iSCSI NAS operates at this low level, its cache eviction decisions must be made per block and with little room for error. Virtual machine deployments heavily utilize block-level storage. A single host might run dozens of virtual machines, generating a massive, randomized blend of read requests. Cache algorithms in an iSCSI NAS therefore benefit from weighing access frequency alongside recency, as ARC does, rather than relying on recency alone. If the system miscalculates and evicts the wrong blocks, the virtual machines will experience severe input/output wait times that can stall critical services.
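To make the block-level view concrete, the sketch below shows how a byte-range read could be translated into the block identifiers a cache would track. The function name, the `lun_id` parameter, and the 4,096-byte block size are assumptions made for illustration; actual block sizes and addressing depend on the platform.

```python
def blocks_for_request(lun_id, offset_bytes, length_bytes, block_size=4096):
    """Return the (lun_id, block_number) cache keys touched by one read request."""
    if offset_bytes < 0 or block_size < 1:
        raise ValueError("offset must be non-negative and block_size positive")
    if length_bytes <= 0:
        return []
    first = offset_bytes // block_size
    last = (offset_bytes + length_bytes - 1) // block_size  # last byte actually read
    return [(lun_id, number) for number in range(first, last + 1)]


# A 5,000-byte read starting at byte 6,000 of a hypothetical LUN 0.
keys = blocks_for_request(0, 6000, 5000)
```

The cache sees only keys such as `(0, 1)` and `(0, 2)`. Nothing in them says whether the blocks belong to a database file, a virtual disk image, or free space, so access history per block is the only signal the eviction policy has.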

Achieving Predictable Read Performance

Maintaining predictable read performance requires more than just selecting a policy. Administrators must actively monitor cache hit rates and adjust hardware resources accordingly.

If a NAS system consistently reports a low cache hit ratio despite utilizing an advanced algorithm like ARC, the underlying issue might simply be a lack of physical cache capacity. Workloads evolve. A database that fit comfortably into a 64 GB cache layer two years ago may now require 128 GB to maintain the same performance metrics.
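Monitoring of this kind can be sketched as a sliding-window hit ratio with an alert threshold. The `HitRatioMonitor` class, the window size, and the 90% threshold are hypothetical choices for illustration; a sensible threshold depends on the workload.

```python
from collections import deque


class HitRatioMonitor:
    """Tracks the hit ratio over the most recent reads and flags sustained drops."""

    def __init__(self, window=1000, threshold=0.90):
        self.samples = deque(maxlen=window)  # True for a hit, False for a miss
        self.threshold = threshold

    def record(self, was_hit):
        self.samples.append(bool(was_hit))

    def hit_ratio(self):
        if not self.samples:
            return None  # nothing observed yet
        return sum(self.samples) / len(self.samples)

    def needs_attention(self):
        ratio = self.hit_ratio()
        return ratio is not None and ratio < self.threshold


monitor = HitRatioMonitor(window=10, threshold=0.90)
for was_hit in [True] * 7 + [False] * 3:  # 7 hits and 3 misses
    monitor.record(was_hit)
```

If the ratio stays below the threshold even though the eviction policy is sound, the working set has probably outgrown the cache, which points to adding capacity rather than changing algorithms.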

Furthermore, tiered caching structures offer another layer of predictability. Many systems use a small pool of volatile RAM as the primary cache (Level 1) and a larger pool of fast NVMe storage as a secondary cache (Level 2). When the primary cache evicts data, it moves to the secondary cache rather than being discarded entirely. This tiered approach gives enterprise NAS storage setups a safety net: even if data falls out of the fastest memory, it remains quickly accessible.
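A two-tier arrangement of this kind can be sketched as follows. The `TieredCache` class is hypothetical, both tiers use simple LRU ordering for brevity, and real systems apply their own rules about what gets written to the second tier.

```python
from collections import OrderedDict


class TieredCache:
    """Two-tier sketch: entries evicted from L1 (RAM) are demoted to L2 (NVMe)."""

    def __init__(self, l1_capacity, l2_capacity):
        if l1_capacity < 1 or l2_capacity < 1:
            raise ValueError("both tiers need a capacity of at least 1")
        self.l1_capacity = l1_capacity
        self.l2_capacity = l2_capacity
        self.l1 = OrderedDict()  # least recently used first
        self.l2 = OrderedDict()

    def _insert_l1(self, key, value):
        self.l1[key] = value
        self.l1.move_to_end(key)
        if len(self.l1) > self.l1_capacity:
            old_key, old_value = self.l1.popitem(last=False)
            self.l2[old_key] = old_value     # demote instead of discarding
            self.l2.move_to_end(old_key)
            if len(self.l2) > self.l2_capacity:
                self.l2.popitem(last=False)  # only now does data leave the cache

    def read(self, key, load):
        """Return (value, source) where source is 'l1', 'l2', or 'storage'."""
        if key in self.l1:
            self.l1.move_to_end(key)
            return self.l1[key], "l1"
        if key in self.l2:
            value = self.l2.pop(key)         # promote back into the fast tier
            self._insert_l1(key, value)
            return value, "l2"
        value = load(key)
        self._insert_l1(key, value)
        return value, "storage"


def load(key):
    return f"data for {key}"  # stand-in for a read from primary storage


tiers = TieredCache(l1_capacity=1, l2_capacity=2)
_, first = tiers.read("a", load)   # comes from primary storage
_, second = tiers.read("b", load)  # pushes "a" out of L1 and into L2
_, third = tiers.read("a", load)   # served from L2, not from primary storage
```

The third read shows the safety net at work: "a" was evicted from the RAM tier, but it is still found in the NVMe tier and never touches the slow disks.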

Frequently Asked Questions (FAQ)

How do I know if my cache eviction policy is failing?

The most obvious symptom is unpredictable latency. If applications occasionally experience massive delays during standard read operations, your cache hit ratio is likely dropping. Monitoring tools within your storage software can confirm whether cache misses are spiking during these periods.

Can I manually configure cache eviction on my storage?

This depends heavily on the vendor. Some advanced operating systems, like TrueNAS utilizing ZFS, allow administrators to tune parameters related to ARC behavior. However, many turnkey systems manage eviction automatically to prevent misconfiguration.
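Before changing any tunable, it helps to read the counters the cache already exposes. As one example, OpenZFS on Linux publishes ARC statistics as a plain-text table at `/proc/spl/kstat/zfs/arcstats`; whether that path is available on a given appliance is an assumption to verify. The sketch below parses text in that three-column layout, and the sample values are invented for illustration.

```python
def parse_arcstats(text):
    """Parse 'name type data' rows into a dict, skipping header lines."""
    stats = {}
    for line in text.splitlines():
        fields = line.split()
        if len(fields) == 3 and fields[2].isdigit():
            stats[fields[0]] = int(fields[2])
    return stats


def arc_hit_ratio(stats):
    """Hit ratio from the cumulative 'hits' and 'misses' counters."""
    total = stats["hits"] + stats["misses"]
    return stats["hits"] / total if total else None


# Invented sample in the kstat layout; real files contain many more rows.
SAMPLE = """\
name                            type data
hits                            4    900
misses                          4    100
c_max                           4    8589934592
"""

stats = parse_arcstats(SAMPLE)
ratio = arc_hit_ratio(stats)
```

On a live system the same functions could be fed the contents of the arcstats file. The `c_max` row reports the ARC's maximum target size in bytes, which is a useful number to check before deciding whether a size limit needs adjusting.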

Does block-level storage require different caching than file-level?

Yes. As seen with an iSCSI NAS, block-level storage deals with raw data blocks. Algorithms must handle highly randomized I/O efficiently. File-level storage (like SMB or NFS) benefits from knowing which file each request belongs to and can often utilize simpler read-ahead logic.

Will adding more RAM fix my read performance?

Often, yes. Expanding the physical cache size allows the system to hold more data simultaneously, reducing the frequency of eviction events. However, if your workload consists entirely of randomized, one-time reads, no amount of RAM will completely eliminate cache misses.

Optimizing Your Storage Strategy

Predictable read performance does not happen by accident. It is the direct result of intelligent data management. By controlling how and when data leaves high-speed memory, cache eviction policies protect the operational integrity of the entire infrastructure.

Whether you are managing a standard file repository or a high-throughput iSCSI NAS supporting critical virtual machines, understanding these algorithms is essential. Evaluate your current storage performance, analyze your workload access patterns, and ensure your cache layer is properly equipped to handle the demands of your applications.
