Enterprise data volumes continue to grow rapidly. Organizations face the complex challenge of storing, managing, and retrieving this data efficiently. IT administrators must balance high-performance computing requirements against strict budgetary constraints. Traditional storage architectures often force a compromise: administrators either over-provision expensive flash storage or tolerate high latency from slower disk drives.

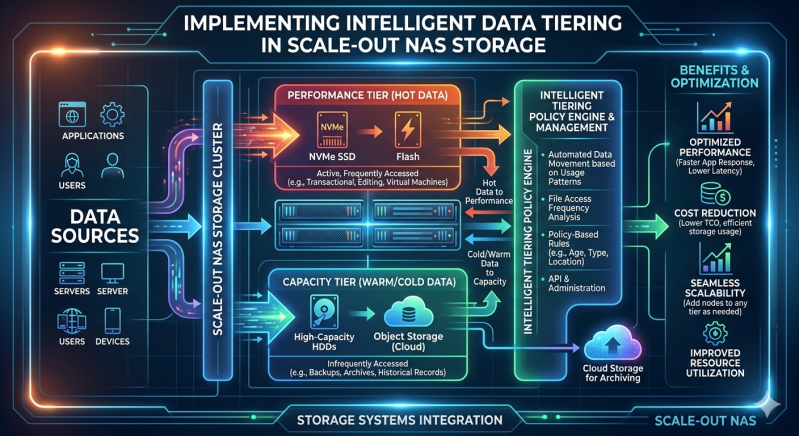

Intelligent data tiering provides a systematic solution to this architectural challenge. This process automatically migrates files between different storage media based on usage patterns, access frequency, and predefined administrative policies. Hot data remains on high-performance drives for immediate access. Cold data systematically moves to high-capacity, lower-cost drives.

Implementing this optimization requires a robust and flexible infrastructure. Administrators must deploy architectures capable of supporting dynamic data mobility without disrupting end-user access. This is where advanced storage solutions become critical to enterprise operations.

Understanding Data Classification: Hot vs. Cold

Effective data tiering requires a precise understanding of data life cycles. Information rarely maintains the same value or access frequency throughout its existence. Recognizing these access patterns is the first step in storage optimization.

Characteristics of Hot Data

Hot data consists of actively accessed information. This includes transactional databases, active project files, and currently running virtual machines. Applications require immediate, low-latency access to this data to maintain operational efficiency. Consequently, hot data belongs on the highest-performing storage media available, such as flash attached over Non-Volatile Memory Express (NVMe) or other solid-state drives (SSDs).

Characteristics of Cold Data

Cold data represents information that users or applications rarely access. Examples include completed project archives, historical compliance records, and outdated backups. While organizations must retain this information for regulatory or historical purposes, maintaining it on premium flash storage wastes valuable IT resources. Cold data should reside on economical storage tiers, such as high-capacity hard disk drives (HDDs) or object storage.

The Role of Scale Out NAS Storage

Legacy storage architectures often rely on scale-up models, where administrators add storage capacity to a single, fixed controller. This approach eventually creates severe performance bottlenecks. A modern approach utilizes Scale out NAS Storage to distribute workloads across multiple interconnected nodes.

Scale out NAS Storage architectures allow organizations to grow capacity and performance together, close to linearly, by adding nodes. When administrators add a new node to the cluster, the system automatically redistributes the data and the processing load. This architecture is fundamental to intelligent tiering. A robust Scale out NAS Storage environment integrates different types of storage nodes within a single namespace.

Users and applications access files through a unified directory structure. The Scale out NAS Storage infrastructure handles the physical location of the data in the background. This abstraction layer is what makes automated tiering possible without disrupting application paths or user workflows.
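The idea of a single namespace over several tiers can be illustrated with a minimal sketch. It assumes a hypothetical in-memory `Namespace` class and two illustrative tier names (`flash` and `hdd`); a real system keeps this mapping in file system metadata, so the names here are assumptions, not any product's API.

```python
from dataclasses import dataclass, field


@dataclass
class Namespace:
    """Maps a stable logical path to whichever tier currently holds the data."""

    # logical path -> tier name (hypothetical tiers: "flash", "hdd")
    _location: dict = field(default_factory=dict)

    def create(self, path: str, tier: str = "flash") -> None:
        self._location[path] = tier

    def migrate(self, path: str, target_tier: str) -> None:
        # Only the physical placement changes; the logical path stays the same.
        if path not in self._location:
            raise FileNotFoundError(path)
        self._location[path] = target_tier

    def resolve(self, path: str) -> str:
        """Return the tier that currently stores the file."""
        return self._location[path]


ns = Namespace()
ns.create("/projects/q3/report.docx")
ns.migrate("/projects/q3/report.docx", "hdd")
print(ns.resolve("/projects/q3/report.docx"))  # hdd
```

The path a user opens never changes during the migration, which is why applications do not need to be reconfigured when data moves.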

Mechanics of Intelligent Data Tiering

Intelligent data tiering relies on continuous metadata analysis. The storage infrastructure monitors file access patterns in real time. When administrators implement a tiering strategy, they define specific policies that dictate data movement.

Automated Policy Management

A centralized NAS System utilizes policy engines to automate data placement. Administrators configure rules based on file age, size, owner, or last access date. For example, a policy might dictate that any file untouched for 90 days automatically moves from the flash tier to the HDD tier. The NAS System continuously scans file metadata against these policies. When a file meets the criteria, the system schedules the migration.
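The 90-day rule above can be sketched as a simple metadata filter. This is an illustration under stated assumptions, not a vendor's policy engine: `FileMeta`, `select_for_demotion`, and the tier names are hypothetical, and the catalog is hard-coded rather than read from a real file system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class FileMeta:
    path: str
    tier: str              # hypothetical tier names: "flash" or "hdd"
    last_access: datetime


def select_for_demotion(files, now, max_idle_days=90):
    """Return flash-tier files that have been idle for at least max_idle_days."""
    cutoff = now - timedelta(days=max_idle_days)
    return [f for f in files if f.tier == "flash" and f.last_access <= cutoff]


now = datetime(2024, 6, 1)
catalog = [
    FileMeta("/db/orders.ibd", "flash", datetime(2024, 5, 30)),
    FileMeta("/archive/2023-audit.pdf", "flash", datetime(2024, 1, 15)),
    FileMeta("/archive/2022-audit.pdf", "hdd", datetime(2023, 1, 10)),
]
for f in select_for_demotion(catalog, now):
    print(f.path)  # /archive/2023-audit.pdf
```

A fuller policy would add the other criteria mentioned above (file size, owner, age) as extra conditions in the same filter.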

This automation removes most manual data management. IT staff no longer need to spend hours identifying inactive files and migrating them by hand. The NAS System can schedule migrations during off-peak hours to preserve network bandwidth.

Performance and Cost Benefits

Deploying intelligent tiering within a NAS System delivers measurable financial and operational advantages. Organizations minimize their investment in expensive SSDs by reserving them strictly for active workloads. Simultaneously, they leverage the cost-efficiency of high-capacity HDDs for bulk data retention.

Furthermore, tiering works in both directions: reading a cold file can promote it back to the hot tier. If a user suddenly needs an archived project file, the NAS System detects the read request and moves the file back to the flash tier, so subsequent reads run at flash performance. This dynamic mobility helps active workloads receive the input/output operations per second (IOPS) they need.

Best Practices for Implementing Tiering

Organizations must approach data tiering systematically to achieve optimal results. Proper planning prevents misconfigurations that could degrade application performance.

First, administrators must profile their existing data. Before configuring a NAS System, IT teams should deploy analytics tools to determine the ratio of hot to cold data. Many enterprises find that a large majority of their data, often cited as 70 to 80 percent, is cold, but the figure varies by workload and should be measured rather than assumed. This baseline metric dictates how much flash versus disk capacity the organization needs to purchase.
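A rough way to compute that baseline is to sum bytes by last-access age. The sketch below assumes a hypothetical `cold_fraction` helper fed with `(size_bytes, last_access)` pairs and a 90-day cold threshold; the inventory values are invented for illustration. In practice the metadata would come from a file system scan or an analytics tool, and access timestamps may be unreliable on volumes mounted with access-time updates disabled.

```python
from datetime import datetime, timedelta


def cold_fraction(files, now, cold_after_days=90):
    """Return the share of total bytes not accessed within cold_after_days.

    files: iterable of (size_bytes, last_access) tuples.
    """
    cutoff = now - timedelta(days=cold_after_days)
    total = cold = 0
    for size, last_access in files:
        total += size
        if last_access < cutoff:
            cold += size
    return cold / total if total else 0.0


now = datetime(2024, 6, 1)
inventory = [
    (200, datetime(2024, 5, 28)),   # active database file
    (500, datetime(2023, 11, 2)),   # completed project archive
    (300, datetime(2022, 7, 19)),   # historical compliance record
]
print(f"{cold_fraction(inventory, now):.0%}")  # 80%
```

Measuring by bytes rather than by file count matters here, because capacity purchases depend on how much space the cold data occupies, not on how many files it spans.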

Second, configure conservative tiering policies initially. When deploying Scale out NAS Storage, administrators should start with generous retention periods on the hot tier. Setting an aggressive policy, such as moving data after only seven days of inactivity, can cause "data thrashing." Thrashing occurs when the system constantly moves the same files back and forth between tiers, consuming processing power and network bandwidth.
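The effect of an overly aggressive threshold can be shown with a small simulation. It assumes a simplified model in which a file is demoted once it has been idle for the threshold and promoted on its next read; `count_migrations` and its parameters are hypothetical names chosen for this sketch.

```python
def count_migrations(access_days, idle_threshold_days, horizon_days):
    """Count tier moves for one file under a demote-when-idle, promote-on-read policy."""
    accesses = set(access_days)
    tier = "flash"
    last_access = 0
    moves = 0
    for day in range(1, horizon_days + 1):
        if day in accesses:
            if tier == "hdd":
                tier = "flash"  # promotion triggered by the read
                moves += 1
            last_access = day
        elif tier == "flash" and day - last_access >= idle_threshold_days:
            tier = "hdd"  # demotion once the idle threshold is reached
            moves += 1
    return moves


# A file that is read every 10 days over a 60-day window.
reads = range(10, 61, 10)
print(count_migrations(reads, idle_threshold_days=7, horizon_days=60))   # 12
print(count_migrations(reads, idle_threshold_days=30, horizon_days=60))  # 0
```

With a seven-day threshold the file is moved twelve times in two months even though its access pattern never changes, while a 30-day threshold leaves it on the hot tier the whole time.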

Finally, monitor tiering operations continuously. A well-optimized Scale out NAS Storage environment requires regular audits. Administrators should review tiering logs to verify that data moves according to the defined rules. If the hot tier consistently reaches maximum capacity, the organization may need to adjust its policy parameters or add another high-performance node.

Optimizing Your Storage Infrastructure

Intelligent data tiering transforms passive storage repositories into dynamic, cost-effective assets. By automatically aligning data value with storage costs, organizations maximize their hardware investments. Evaluate your current storage infrastructure to identify potential bottlenecks. Consult with your storage engineering team to conduct a comprehensive data profile. Implement automated policies that reflect your specific business workflows, and ensure your underlying architecture can scale seamlessly as your data requirements evolve.
