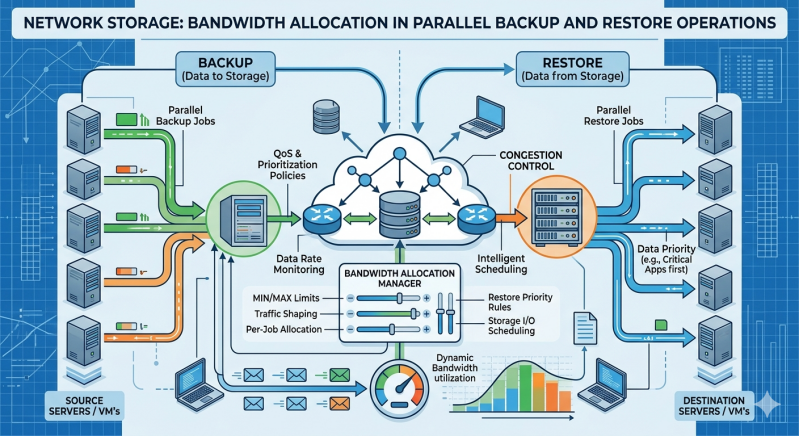

Organizations rely on continuous data availability and robust disaster recovery frameworks. Running backup and restore operations in parallel demands significant network resources. When these processes run concurrently, they can saturate network links and cause severe latency for production applications.

Modern Network Storage Solutions resolve this challenge through intelligent bandwidth management. System administrators must understand how these mechanisms operate to maintain network performance during peak input/output (I/O) loads. By implementing systematic controls, IT teams can ensure data protection processes operate transparently in the background.

The Impact of Concurrent Data Operations

Backing up large datasets while restoring critical systems creates a heavy bidirectional traffic flow. Without proper management, Transmission Control Protocol (TCP) overhead combined with raw data transfer can overwhelm standard network interfaces.

Network-attached storage (NAS) devices and enterprise storage area networks (SANs) address this by implementing granular control mechanisms. These controls prevent administrative tasks from degrading the performance of user-facing applications. When a server requests a massive data restoration at the exact moment a scheduled system backup begins, the storage controller must immediately decide how to schedule and prioritize the competing traffic.

Quality of Service (QoS) and Traffic Shaping

The foundation of bandwidth management lies in established networking protocols designed to organize traffic flow.

Priority-Based Queuing

Quality of Service (QoS) is a core feature in modern Network Storage Solutions, enabling administrators to classify and prioritize data packets effectively. Production application traffic typically receives the highest priority. Backup streams are assigned lower priority, ensuring they only consume available bandwidth without interrupting critical services. During a parallel restore, administrators can elevate the restore traffic priority to minimize system downtime, temporarily throttling the regular background backups.
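The priority ordering described above can be sketched as a small strict-priority scheduler. This is an illustrative model only, not any vendor's QoS implementation: the class names (`production`, `restore`, `backup`) and the `PriorityScheduler` type are hypothetical, and real devices usually classify traffic with packet markings rather than string labels.

```python
import heapq
from itertools import count

# Hypothetical traffic classes for illustration; a lower number is served first.
# "restore" sits above "backup" to model an elevated restore during an outage.
PRIORITY = {"production": 0, "restore": 1, "backup": 2}


class PriorityScheduler:
    """Strict-priority queue: always sends the highest-priority packet waiting."""

    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker that preserves FIFO order within a class

    def enqueue(self, traffic_class, packet):
        heapq.heappush(self._heap, (PRIORITY[traffic_class], next(self._seq), packet))

    def dequeue(self):
        """Return the next packet to send, or None if nothing is queued."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


def drain(scheduler):
    """Dequeue everything, returning packets in the order they would be sent."""
    sent = []
    while (packet := scheduler.dequeue()) is not None:
        sent.append(packet)
    return sent
```

One caveat with strict priority is that a sustained burst of high-priority traffic can starve the backup queue entirely, which is why production deployments often pair it with weighted queuing or a minimum bandwidth guarantee for lower classes.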

Strict Rate Limiting

Traffic shaping enforces hard limits on data transfer rates. Storage controllers can restrict backup jobs to a specific throughput threshold, such as 500 megabits per second (Mbps) on a 10 gigabit per second (Gbps) link. With that cap in place, backup traffic can never occupy more than 5% of the link, leaving roughly 9.5 Gbps for database queries, file access, and other essential operations.
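A common way to enforce a cap like this is a token bucket. The sketch below is a minimal model under stated assumptions, not a storage controller's actual shaper: the `TokenBucket` class, its parameter names, and the 1 Mbit burst size are all chosen for illustration, and the caller passes timestamps explicitly so the behavior is easy to reason about.

```python
class TokenBucket:
    """Minimal token-bucket rate limiter (illustrative; sizes are in bits)."""

    def __init__(self, rate_bps, burst_bits):
        self.rate_bps = rate_bps      # sustained rate, e.g. 500 Mbps
        self.capacity = burst_bits    # largest burst allowed at once
        self.tokens = burst_bits      # start with a full bucket
        self.last = 0.0               # timestamp of the previous check, in seconds

    def allow(self, size_bits, now):
        """Return True if a transfer of size_bits may be sent at time `now`."""
        elapsed = now - self.last
        self.last = now
        # Refill in proportion to elapsed time, never beyond the burst capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_bps)
        if size_bits <= self.tokens:
            self.tokens -= size_bits
            return True
        return False


# Example: cap a backup stream at 500 Mbps with a 1 Mbit burst allowance.
backup_limiter = TokenBucket(rate_bps=500_000_000, burst_bits=1_000_000)
```

A sender that receives `False` would typically wait and retry rather than drop the data, which is what distinguishes shaping (delaying) from policing (discarding).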

Dynamic Bandwidth Throttling

Static limits often result in underutilized networks during off-peak hours. Advanced storage arrays solve this by adapting to the environment in real time.

Load-Based Adjustments

Modern network-attached storage systems utilize dynamic throttling algorithms. These systems constantly monitor real-time network latency and switch port congestion. If the network is completely idle, the storage array allows the backup and restore operations to consume the maximum available bandwidth so the jobs complete rapidly. Once user traffic spikes or production applications demand resources, the system automatically dials back the backup and restore traffic to a sustainable level.
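One simple way to model this behavior is an additive-increase, multiplicative-decrease (AIMD) control loop, the same general idea TCP congestion control uses. The source does not say which algorithm storage arrays actually run, so treat the function below as a sketch: `next_backup_rate`, the 5 ms latency target, and every default value are assumptions for illustration.

```python
def next_backup_rate(
    current_mbps,
    latency_ms,
    *,
    target_ms=5.0,         # assumed latency threshold that signals congestion
    floor_mbps=100.0,      # never throttle the job below this rate
    ceiling_mbps=10_000.0, # link speed, here a 10 Gbps link
    step_mbps=250.0,       # additive increase while the network is healthy
    backoff=0.5,           # multiplicative decrease when congestion appears
):
    """Return the backup rate to use for the next monitoring interval."""
    if latency_ms > target_ms:
        # Production traffic is suffering: cut the rate sharply.
        return max(floor_mbps, current_mbps * backoff)
    # Network is healthy: probe upward gradually.
    return min(ceiling_mbps, current_mbps + step_mbps)
```

Calling this once per monitoring interval lets the job climb toward line rate on an idle network and retreat quickly when measured latency crosses the threshold.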

Scheduled Allocation

Time-based policies provide predictable and reliable bandwidth control. Administrators configure Network Storage Solutions to operate without limits during nighttime hours or weekends. During standard business hours, the system applies strict bandwidth caps to parallel operations, protecting the user experience.
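A time-based policy reduces to a lookup on the current day and hour. The sketch below assumes business hours of 08:00 to 18:00, Monday through Friday, and a 500 Mbps daytime cap; those values and the `bandwidth_cap_mbps` function name are illustrative and not taken from any particular product.

```python
from datetime import datetime


def bandwidth_cap_mbps(when, business_cap_mbps=500):
    """Return the cap in Mbps for a given moment, or None when uncapped.

    Assumed policy: weekdays 08:00-18:00 are capped; nights and weekends are not.
    """
    is_weekend = when.weekday() >= 5       # Monday is 0, so 5 and 6 are Sat/Sun
    in_business_hours = 8 <= when.hour < 18
    if is_weekend or not in_business_hours:
        return None
    return business_cap_mbps


# Example: evaluate the policy for the current local time.
current_cap = bandwidth_cap_mbps(datetime.now())
```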

Data Reduction Techniques

Bandwidth management is not strictly limited to networking protocols. Reducing the actual payload sent across the wire is a highly effective optimization strategy.

Inline Deduplication

Before transmitting backup data across the network, intelligent storage systems evaluate the data blocks. If a specific block of data already exists on the target server, the system transmits a tiny metadata pointer instead of the full block. This significantly reduces the bandwidth required for large-scale parallel backups.
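The block-versus-pointer decision can be illustrated with content hashing. This is a simplified sketch assuming fixed 4 KiB blocks and SHA-256 fingerprints; production systems often use variable-size chunking and their own index formats, and the `plan_transfer` function and the `"data"`/`"ref"` tags are hypothetical names.

```python
import hashlib

BLOCK_SIZE = 4096  # assumed fixed block size for this illustration


def plan_transfer(data, known_hashes, block_size=BLOCK_SIZE):
    """Split data into blocks and decide what must cross the network.

    known_hashes is the set of fingerprints the target already holds.
    Returns a list of ("data", block_bytes) or ("ref", hex_digest) entries.
    """
    plan = []
    for offset in range(0, len(data), block_size):
        block = data[offset:offset + block_size]
        digest = hashlib.sha256(block).hexdigest()
        if digest in known_hashes:
            # Target already has this block: send only the short fingerprint.
            plan.append(("ref", digest))
        else:
            plan.append(("data", block))
            known_hashes.add(digest)  # later duplicates can now be referenced
    return plan
```

In this model a repeated 4 KiB block costs a 64-character digest on the wire instead of 4096 bytes, which is where the bandwidth saving comes from.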

Compression Algorithms

Data compression shrinks the payload before it leaves the source network interface card (NIC). Network-attached storage devices utilize hardware-accelerated compression to minimize the data footprint in transit. Compression consumes processing resources on the storage nodes, but for compressible data it substantially lowers bandwidth consumption on the network switches. Data that is already compressed or encrypted gains little.
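The trade-off can be sketched in software with Python's standard `zlib` module, standing in here for the hardware-accelerated engines the text describes. The helper names `compress_for_transfer` and `restore_payload` are hypothetical, and falling back to the raw payload when compression does not help is one reasonable design choice rather than a documented vendor behavior.

```python
import zlib


def compress_for_transfer(payload, level=6):
    """Compress a payload, falling back to the raw bytes if that is smaller.

    Returns (is_compressed, body) so the receiver knows how to decode it.
    """
    compressed = zlib.compress(payload, level)
    if len(compressed) < len(payload):
        return True, compressed
    # Incompressible input: sending it raw avoids wasting bandwidth and CPU.
    return False, payload


def restore_payload(is_compressed, body):
    """Reverse compress_for_transfer on the receiving side."""
    return zlib.decompress(body) if is_compressed else body
```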

Architectural Solutions: Segmentation and Link Aggregation

Software controls work best when supported by a properly designed physical and logical network architecture.

Virtual Local Area Networks (VLANs)

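Logical isolation keeps backup traffic out of the broadcast domains that carry user data. By placing network-attached storage replication interfaces on dedicated backup VLANs, network engineers logically separate the traffic domains. VLANs alone do not add capacity, however: if the backup VLAN shares a physical link with production traffic, the two still compete for that link's bandwidth. Pairing the backup VLAN with dedicated ports, or with the QoS policies described earlier, keeps heavy parallel operations from crowding out latency-sensitive application traffic.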

Multipathing and LACP

Link Aggregation Control Protocol (LACP) bundles multiple physical network ports into a single logical channel. If a parallel backup and restore operation requires 20 Gbps of aggregate throughput, a single 10 Gbps link cannot carry it and will drop packets. LACP distributes traffic flows across multiple physical links, providing the necessary capacity and adding critical redundancy to the storage infrastructure. Because each individual flow is typically pinned to one member link, the extra capacity is realized only when the workload consists of several concurrent streams.
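Aggregated links usually spread traffic by hashing header fields of each flow and using the result to pick a member link. The sketch below illustrates that idea with a CRC32 over the address and port tuple; actual switches and NICs use vendor-specific hash inputs and algorithms, so `pick_link` and its choice of fields are assumptions for illustration only.

```python
import zlib


def pick_link(src_ip, dst_ip, src_port, dst_port, link_count):
    """Map a flow to one member link of an aggregated bundle.

    The same flow always hashes to the same link, which preserves packet
    ordering but means one flow cannot exceed a single link's speed.
    """
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}".encode()
    return zlib.crc32(key) % link_count


# Example: a backup stream and a restore stream between the same two hosts
# are separate flows and may be assigned to different member links.
backup_link = pick_link("10.0.0.10", "10.0.0.20", 50001, 445, link_count=2)
restore_link = pick_link("10.0.0.20", "10.0.0.10", 50002, 445, link_count=2)
```

This is why running backup and restore as multiple parallel streams tends to use a bundle more evenly than one large stream does.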

Securing Operational Continuity

Managing bandwidth during parallel data operations requires a layered, systematic approach. Relying on a single method often leaves networks vulnerable to congestion and packet loss. By combining QoS policies, dynamic throttling algorithms, data reduction techniques, and network segmentation with link aggregation, organizations help ensure their storage infrastructure performs reliably under heavy stress. Evaluate your current Network Storage Solutions to confirm they support these granular bandwidth controls. Proper configuration protects production performance while maintaining strict recovery time objectives.
